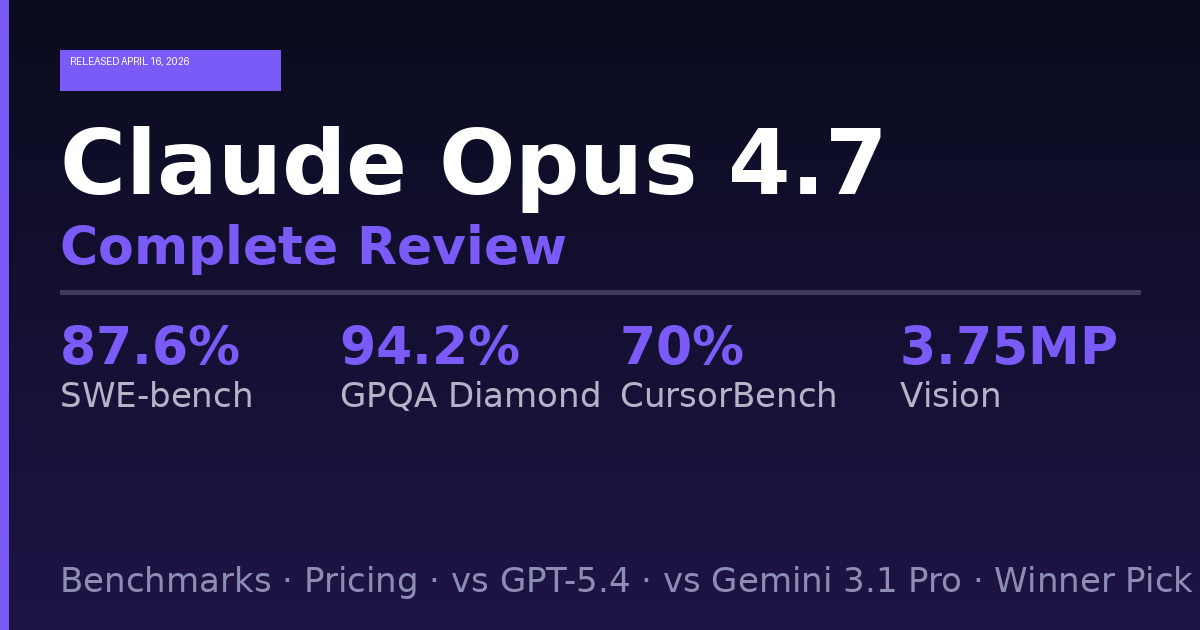

Claude Opus 4.7

Full Review, Benchmarks, Pricing, and Winner Pick (April 2026)

Anthropic shipped Claude Opus 4.7 on April 16, 2026, roughly two months after Opus 4.6 landed in February. The model ID is claude-opus-4-7. It is available today on Claude.ai, the Claude API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. GitHub Copilot began rolling it out to Copilot Pro+, Business, and Enterprise users on the same day.

This is not a generational overhaul. It is a targeted, high-impact upgrade across the four areas developers and enterprise teams have pushed hardest on: coding, vision, long-horizon agentic reliability, and instruction precision. The price did not change. The capabilities did.

This article covers everything that actually matters: all confirmed benchmark figures, a full pricing breakdown, a comparison table against GPT-5.4 and Gemini 3.1 Pro, what the community is saying on X and Reddit, and a clear winner pick by use case.

How We Got Here: The Leak, the Market Reaction, and the Prediction Markets

The backstory around this launch is worth knowing because it illustrates how Anthropic’s release cadence has become one of the most closely tracked in the industry.

In March 2026, a misconfigured npm package briefly exposed over 500,000 lines of Claude Code source code. The leak included internal references to Opus 4.7 and Sonnet 4.8 in something called the “Undercover Mode” forbidden-version-strings list, strings the model is trained not to mention publicly. Also referenced in the same files: models codenamed Mythos and Capybara.

On April 14, The Information published an exclusive report that Anthropic was preparing Opus 4.7 alongside a prompt-based AI design tool for websites and presentations, potentially shipping "as soon as this week." Within hours, FIG (Figma), ADBE (Adobe), WIX, and GDDY (GoDaddy) all traded 2 to 4 percent lower. Analysts attributed the drop to competitive pressure from the reported design tool.

On X, @aakashgupta put it simply: “A product that doesn’t exist yet just vaporized billions.” That post got traction because it captured something real. Anthropic entering the AI design market, even as a rumor, was enough to reprice four publicly listed companies.

Polymarket traders assigned a 79 percent implied probability to an April 16 launch. They were right. Opus 4.7 went live on the evening of April 16. The AI design tool did not ship and is still pending.

Anthropic’s own Boris Cherny noted on X: “Internal experiments often never make it to release. Code references are roadmap signals, not launch guarantees.” He was right to add the caveat. But this one shipped.

What Actually Changed: The Seven Core Upgrades

1. Coding Benchmarks, Substantially Up

On SWE-bench Pro, Opus 4.7 scores 64.3 percent, up from 53.4 percent on Opus 4.6, and ahead of GPT-5.4 at 57.7 percent and Gemini 3.1 Pro at 54.2 percent. On SWE-bench Verified, the curated subset, the score is 87.6 percent, compared with 80.8 percent for Opus 4.6 and 80.6 percent for Gemini 3.1 Pro. OfficeChai

CursorBench, which measures autonomous coding performance inside the popular AI code editor, shows a similar jump: 70 percent, up from 58 percent on Opus 4.6. The Next Web

On an internal 93-task coding benchmark run by Hex, Opus 4.7 lifted resolution by 13 percent over Opus 4.6, including four tasks neither Opus 4.6 nor Sonnet 4.6 could solve. Anthropic

Cursor responded to the launch by announcing 50 percent off for Opus 4.7 adoption. For context, Claude Code alone had reached $2.5 billion in annualized revenue in February 2026, and AI-assisted coding has become one of the fastest-growing categories in enterprise software spend. The Next Web

2. Vision, Tripled in Resolution

Previous Claude models capped image input at 1,568 pixels on the long edge, about 1.15 megapixels. Opus 4.7 raises that to 2,576 pixels on the long edge, about 3.75 megapixels. Screenshots, design mockups, documents, and photographs come through at higher fidelity. Coordinate mapping is now 1:1 with actual pixels, eliminating the scale-factor math that computer-use workflows previously required. Apidog

At high resolution, Opus 4.7 behaves like a materially different vision model. At full resolution it scores 79.5 percent on visual navigation without tools, versus 57.7 percent for Opus 4.6 (The-ai-corner). That is a 21.8-point jump on the same benchmark, reflecting both the new model and the higher input resolution.

Improvements are concentrated in low-level perception tasks: pointing, measuring, and counting, as well as bounding-box detection and natural-image localization.
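Why the higher ceiling removes the scale-factor math can be shown with a minimal sketch. It assumes, without confirmation from Anthropic, that oversized images are shrunk proportionally until they satisfy both the long-edge cap and the approximate megapixel cap quoted above; the helper names are hypothetical. For a common 2560x1440 screenshot, the old caps force a downscale, so every coordinate the model returns must be rescaled, while the new caps let the image through untouched.

```python
import math

# Approximate caps quoted in this article: (max long edge in px, max total pixels).
# Treating both as hard resize limits is an assumption made for illustration.
OLD_LIMITS = (1568, 1_150_000)
NEW_LIMITS = (2576, 3_750_000)


def downscale_factor(width: int, height: int, max_long_edge: int, max_pixels: int) -> float:
    """Hypothetical helper: proportional shrink factor (never above 1.0)
    that satisfies both the long-edge cap and the total-pixel cap."""
    edge_factor = max_long_edge / max(width, height)
    pixel_factor = math.sqrt(max_pixels / (width * height))
    return min(1.0, edge_factor, pixel_factor)


def to_original_coords(x: float, y: float, factor: float) -> tuple[float, float]:
    """Map a point reported on the resized image back onto the original screenshot."""
    return x / factor, y / factor


w, h = 2560, 1440  # a common QHD screenshot
old = downscale_factor(w, h, *OLD_LIMITS)
new = downscale_factor(w, h, *NEW_LIMITS)
print(f"old caps: factor {old:.3f}, click (700, 400) -> {to_original_coords(700, 400, old)}")
print(f"new caps: factor {new:.3f}, click (700, 400) -> {to_original_coords(700, 400, new)}")
```

Under the old caps the factor comes out to about 0.559, set by the megapixel limit rather than the long edge; under the new caps it is 1.0, so coordinates map directly onto screen pixels.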

3. The xhigh Effort Level

Opus 4.7 introduces a new xhigh effort level between high and max, giving users finer control over the tradeoff between reasoning and latency on hard problems. In Claude Code, the default effort level has been raised to xhigh for all plans. Anthropic

At every effort level, Opus 4.7 outperforms Opus 4.6’s equivalent. The new xhigh at 100,000 tokens scores 71 percent, already ahead of Opus 4.6’s max at 200,000 tokens. The-ai-corner

The math is meaningful: at xhigh, Opus 4.7 delivers better coding results than Opus 4.6 at max while spending half the thinking tokens.
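The halving can be put in dollar terms with a small sketch. It assumes, as with earlier Claude models, that thinking tokens are billed at the $25-per-million output rate (unchanged between Opus 4.6 and 4.7), and that each run consumes its full thinking allowance; real runs often use less, and the new tokenizer can shift counts. The function name is hypothetical.

```python
# Output-token list price (USD per million), the same for Opus 4.6 and 4.7 per this article.
OUTPUT_PRICE_PER_M = 25.00


def thinking_cost_usd(thinking_tokens: int, price_per_m: float = OUTPUT_PRICE_PER_M) -> float:
    """Dollar cost of a reasoning trace, ASSUMING thinking tokens bill at the output rate."""
    return thinking_tokens / 1_000_000 * price_per_m


# Assumes each run spends its full thinking allowance.
opus_47_xhigh = thinking_cost_usd(100_000)
opus_46_max = thinking_cost_usd(200_000)
print(f"Opus 4.7 xhigh: ${opus_47_xhigh:.2f} per run")
print(f"Opus 4.6 max:   ${opus_46_max:.2f} per run")
print(f"Saved per 1,000 runs: ${(opus_46_max - opus_47_xhigh) * 1_000:,.2f}")
```

Under those assumptions the comparison is $2.50 versus $5.00 of thinking per run.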

4. Task Budgets (Public Beta)

Task budgets are a way to give the model a rough token target for an entire agentic loop rather than a single turn. The model sees a running countdown and uses it to prioritize work, skip low-value steps, and finish gracefully as the budget runs out. Apidog

Task budgets are advisory, not hard caps. The minimum is 20,000 tokens. They address one of the most common failure modes in production agents: runaway token consumption on tasks that do not warrant deep exploration.
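Task budgets are handled by the model itself, and the API parameter that sets them is not described here, so the sketch below makes no API call. It is a hypothetical client-side analogue (every name is invented) that mimics the described behavior: a running countdown, low-value steps skipped as the budget tightens, and an advisory limit that essential steps are allowed to exceed.

```python
from dataclasses import dataclass

MIN_TASK_BUDGET = 20_000  # minimum quoted for the public beta


@dataclass
class Step:
    """One unit of agent work (hypothetical structure for illustration)."""
    name: str
    est_tokens: int   # estimated token cost of the step
    essential: bool   # False marks low-value work that can be skipped


def plan_with_budget(steps: list[Step], budget: int, reserve_ratio: float = 0.25) -> list[str]:
    """Mimic an advisory task budget on the client side.

    A running countdown tracks the remaining tokens. Non-essential steps are
    skipped if running them would dip into the reserve kept for finishing up.
    Essential steps always run, so the total can exceed the budget: it is a
    target, not a hard cap.
    """
    if budget < MIN_TASK_BUDGET:
        raise ValueError(f"task budget must be at least {MIN_TASK_BUDGET} tokens")
    remaining = budget
    reserve = int(budget * reserve_ratio)
    executed: list[str] = []
    for step in steps:
        if not step.essential and remaining - step.est_tokens < reserve:
            continue  # skip low-value work to protect the reserve
        remaining -= step.est_tokens  # countdown; may go negative
        executed.append(step.name)
    return executed


steps = [
    Step("read failing test", 4_000, True),
    Step("explore unrelated modules", 16_000, False),
    Step("write fix", 8_000, True),
    Step("polish docstrings", 7_000, False),
    Step("run tests", 3_000, True),
]
print(plan_with_budget(steps, 24_000))
```

With a 24,000-token budget the sketch keeps the three essential steps and skips both low-value ones; with a 100,000-token budget all five run.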

5. The /ultrareview Command in Claude Code

Claude Code introduces /ultrareview, a dedicated command for in-depth code review sessions. This is not a simple code check. It is a structured session that analyzes architecture, security, performance, and maintainability. Pasquale Pillitteri

Pro and Max users get three free ultrareviews per billing cycle. Additional reviews consume tokens at standard Opus 4.7 rates. Nxcode

For teams that currently run manual code review checklists or pay for dedicated security audit tooling, this is a direct functional replacement for routine audits.

6. Stricter Instruction Following

The model will not silently generalize an instruction from one item to another or infer requests you did not make. This is especially noticeable at lower effort levels. Response length adapts to task complexity rather than defaulting to a fixed verbosity. The model uses reasoning more and tools less. The tone is more direct and opinionated, with less validation-forward phrasing than Opus 4.6. Fello AI

This is a double-edged change. Prompts tuned for Opus 4.6's looser interpretation will need adjustment. But systems that were tripped up by the model extrapolating intent get much more predictable behavior.

7. Updated Tokenizer and Expanded Knowledge Cutoff

The knowledge cutoff moved from May 2025 to January 2026, giving Opus 4.7 eight more months of training data. Apiyi.com Blog

Opus 4.7 uses a new tokenizer that may produce up to 35 percent more tokens than previous models for the same text (Claude API Docs). Per-token pricing is unchanged, but effective cost per request can rise depending on content type. This is the most important migration detail for anyone running Opus at scale.

X and Reddit Community Reactions

The reaction on X within hours of the launch was overwhelmingly positive, particularly from developers who work in Cursor and Claude Code daily.

Several engineers posted immediate first impressions. The consensus theme: the jump in agentic reliability is the most noticeable change in practice, not just on paper. Multiple users noted that tasks which used to stall or require manual intervention completed without prompting. One Cursor power user on X described it as "the first time I felt comfortable walking away from a long refactor and trusting the output."

The vision upgrade got significant attention from users doing document extraction and UI automation work. Several X accounts in the computer-use and RPA space called the 2,576px ceiling “the thing that finally makes screenshot-based automation viable at production quality.”

On Reddit, the r/ClaudeAI and r/MachineLearning communities were active within the first few hours. Two concerns dominated the threads. The first was the tokenizer change and its cost implications: multiple developers flagged that existing cost estimates for Opus-based agents need to be recalculated before switching production traffic. The concern is legitimate. If you currently spend $1,000 per month on Opus 4.6, expect $1,000 to $1,350 per month for the same workload on Opus 4.7 before any optimization. Nxcode

Second, the breaking API changes. Developers who set temperature, top_p, top_k, or used extended thinking budgets directly found that those calls now return 400 errors on Opus 4.7. The migration path is to switch to adaptive thinking and remove sampling parameters, but it caught people off guard.

The GitHub Copilot launch also generated discussion. Opus 4.7 is rolling out on GitHub Copilot with a 7.5 times premium request multiplier as part of promotional pricing until April 30th (GitHub). Some users on Reddit found the multiplier steep for casual use and said they would wait for it to normalize.

Full Benchmark Table: Opus 4.7 vs GPT-5.4 vs Gemini 3.1 Pro vs Opus 4.6

| Benchmark | Claude Opus 4.7 | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|---|
| SWE-bench Verified | 87.6% | 80.8% | N/A | 80.6% |
| SWE-bench Pro | 64.3% | 53.4% | 57.7% | 54.2% |
| CursorBench | 70% | 58% | N/A | N/A |
| GPQA Diamond | 94.2% | ~90.5% | 94.4% (Pro) | 94.3% |
| BigLaw Bench (Harvey) | 90.9% | Lower (figure not given) | N/A | N/A |
| BrowseComp | 79.3% | 83.7% | 89.3% (Pro) | N/A |
| Terminal-Bench 2.0 | 69.4% | N/A | 75.1% (self-reported) | N/A |
| Visual Navigation (no tools) | 79.5% | 57.7% | N/A | N/A |

"(Pro)" marks results for GPT-5.4 Pro. Not shown in the table: Anthropic reports an internal 77.8% on SWE-bench Pro for Mythos Preview, which is not generally available.

Sources: Anthropic official announcement, TNW, officechai.com, the-ai-corner.com, April 2026.

The table also exposes weak spots. BrowseComp dropped from 83.7 percent on Opus 4.6 to 79.3 percent on Opus 4.7, with GPT-5.4 Pro at 89.3 percent holding clear leadership for agentic web search. Terminal-Bench 2.0 at 69.4 percent trails GPT-5.4's self-reported 75.1 percent. Teams running production web research pipelines should test both Opus 4.7 and GPT-5.4 before switching. Digital Applied

The context window remains at one million tokens, half of Gemini 3.1 Pro’s two million, but sufficient for most enterprise use cases. The Next Web

What Real Partners Are Reporting

Anthropic published partner quotes in their official announcement. These are the most credible real-world performance data available at launch:

CodeRabbit, a code review platform: recall improved by over 10 percent, surfacing some of the most difficult-to-detect bugs in their most complex pull requests, while precision remained stable despite the increased coverage. It is a bit faster than GPT-5.4 xhigh on their harness. Anthropic

Cursor: Opus 4.7 is a clear step up over Opus 4.6, scoring 14 percent higher while using fewer tokens and producing one third as many tool errors. It is the first model to pass Cursor's implicit-need tests, and it keeps executing through tool failures that used to stop Opus cold. Anthropic

Hex (data analytics platform): Hex found that low-effort Opus 4.7 is roughly equivalent to medium-effort Opus 4.6, meaning users get higher capability at lower compute spend once calibrated. Anthropic

Genspark’s Super Agent team called out three production metrics: loop resistance, consistency, and graceful error recovery. Opus 4.7 achieves the highest quality-per-tool-call ratio they have measured. Anthropic

Harvey (legal AI): Opus 4.7 demonstrates strong substantive accuracy on BigLaw Bench, scoring 90.9 percent at high effort, with better reasoning calibration on review tables and smarter handling of ambiguous document editing tasks. It correctly distinguishes assignment provisions from change-of-control provisions, a task that has historically challenged frontier models. Anthropic

Pricing: Complete Breakdown

Anthropic held pricing flat from Opus 4.6.

Claude API Pricing for Opus 4.7

| Cost Item | Rate |
|---|---|
| Input tokens (standard) | $5.00 per million tokens |
| Output tokens | $25.00 per million tokens |
| Prompt cache writes | Higher than standard input (1.25x for 5-minute cache writes, 2x for 1-hour) |
| Prompt cache hits | 90% discount on input (about $0.50 per million tokens) |
| Batch processing | 50% discount |
| US-only inference (1P API) | 1.1x multiplier on all categories (e.g., $5.50 input / $27.50 output) |
| Global routing | Standard pricing (default) |

For business users and consumers who want to collaborate on complex tasks, Opus 4.7 is available on Claude for Pro, Max, Team, and Enterprise plans. Anthropic

Claude Subscription Plans (claude.ai)

| Plan | Monthly Price | Opus 4.7 Access | Claude Code |
|---|---|---|---|
| Free | $0 | No | No |
| Pro | $20 | Yes | Limited |
| Max 5x | $100 | Yes | Yes (5x usage) |
| Max 20x | $200 | Yes | Yes (20x usage, Auto mode) |
| Team | Custom | Yes | Yes |
| Enterprise | Negotiated | Yes | Yes |

GitHub Copilot (Opus 4.7)

Opus 4.7 is launching on GitHub Copilot with a 7.5 times premium request multiplier as part of promotional pricing until April 30th. It is available to Copilot Pro+, Business, and Enterprise users. GitHub

The Real Cost Warning: The Tokenizer

The new tokenizer may use up to 35 percent more tokens for the same fixed text (Claude API Docs). Anthropic specifies a range of 1.0 to 1.35x depending on content type. The per-token price is identical, but effective spend per request can be materially higher.

Practical calculation: if you send a system prompt and context that previously consumed 10,000 input tokens, the same text could now register as 10,000 to 13,500 input tokens. At $5 per million tokens, the worst case adds about $0.0175 per call. That is small on its own but compounds quickly: at one million calls per day, it comes to roughly $17,500 per day. Enterprise teams running millions of calls per day should re-benchmark before switching production traffic.

Prompt caching remains the most effective lever. Cache hits cost roughly $0.50 per million input tokens, so even the full 35 percent overhead adds only about $0.175 per million cached tokens on repeat system prompts.
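The per-call arithmetic above can be wrapped in a small estimator. This is a sketch with two simplifying assumptions: only input tokens are modeled, and cache-write surcharges are ignored. The 1.0 to 1.35x range and the $5.00 and $0.50 rates come from this article; the function name is hypothetical.

```python
# Rates from this article (USD per million input tokens).
INPUT_PER_M = 5.00       # standard input
CACHE_HIT_PER_M = 0.50   # cache hits, 90% discount


def input_cost_usd(old_tokens: int, multiplier: float, cached_fraction: float = 0.0) -> float:
    """Estimated input cost of one request after the tokenizer change.

    old_tokens:      token count under the previous tokenizer
    multiplier:      tokenizer inflation, 1.0 to 1.35 per Anthropic's stated range
    cached_fraction: share of the re-tokenized input served from prompt cache
    Simplifications (assumptions): output tokens and cache-write surcharges are ignored.
    """
    if not 1.0 <= multiplier <= 1.35:
        raise ValueError("multiplier outside the stated 1.0-1.35 range")
    new_tokens = old_tokens * multiplier
    cached = new_tokens * cached_fraction
    uncached = new_tokens - cached
    return (uncached * INPUT_PER_M + cached * CACHE_HIT_PER_M) / 1_000_000


CALLS_PER_DAY = 1_000_000
scenarios = {
    "Opus 4.6 baseline": input_cost_usd(10_000, 1.0),
    "Opus 4.7 worst case": input_cost_usd(10_000, 1.35),
    "Opus 4.7 worst case, 90% cached": input_cost_usd(10_000, 1.35, cached_fraction=0.9),
}
for label, per_call in scenarios.items():
    print(f"{label}: ${per_call:.4f} per call, ${per_call * CALLS_PER_DAY:,.0f} per day")
```

The three scenarios come out to about $50,000, $67,500, and $12,825 per day, which is why the cache hit rate matters more to the bill for repeated context than the tokenizer multiplier does.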

Model Comparison: Opus 4.7 vs Opus 4.6 vs Mythos Preview

The Claude model landscape in April 2026 has a clear hierarchy. Mythos Preview is the most capable model Anthropic has ever built, and it breaks benchmarks across the board. But it is locked behind Project Glasswing for good reason: the cybersecurity implications are too significant for unrestricted release. Unless you are defending critical infrastructure, this model is not accessible to you. Nxcode

Opus 4.7 is the model most developers should use starting today. Opus 4.6 is the safe choice for production systems that are already working well, where the tokenizer change and stricter instruction following would require significant re-tuning work before migrating. Nxcode

On SWE-bench Verified, Mythos Preview scores 93.9 percent, a 13.1 point jump over Opus 4.6's 80.8 percent. For context, the gap between GPT-4 and GPT-4o on SWE-bench was about 5 points (Nxcode). Mythos is a different tier of capability entirely. It sits above Opus 4.7 on every benchmark Anthropic has published.

Opus 4.7 vs GPT-5.4: Head-to-Head

Coding (SWE-bench Pro): Opus 4.7 wins at 64.3 percent versus GPT-5.4’s 57.7 percent. The gap is 6.6 points, meaningful for teams where coding is the primary workload.

Graduate-level reasoning (GPQA Diamond): GPT-5.4 Pro edges it at 94.4 percent versus 94.2 percent. Within statistical noise.

Agentic web search (BrowseComp): GPT-5.4 Pro wins clearly at 89.3 percent versus Opus 4.7's 79.3 percent. If your agent needs to browse and synthesize web content at scale, GPT-5.4 is the stronger choice right now.

Terminal operations (Terminal-Bench 2.0): GPT-5.4 leads at 75.1 percent versus 69.4 percent.

Code review speed: CodeRabbit reports Opus 4.7 is faster than GPT-5.4 xhigh on their code review harness. Anthropic

Legal reasoning (BigLaw Bench): Opus 4.7 at 90.9 percent has no published GPT-5.4 equivalent for direct comparison, but Harvey’s team selected Opus 4.7 for their primary workload based on internal testing.

Vision (image resolution and navigation): Opus 4.7 now processes at 3.75 megapixels versus prior Claude models’ 1.15 megapixels, scoring 79.5 percent on visual navigation without tools. No equivalent GPT-5.4 score is published.

Pricing (API): Identical at $5 input / $25 output per million tokens. There is no cost advantage to choosing one over the other at the API level.

Opus 4.7 vs Gemini 3.1 Pro: Head-to-Head

Coding (SWE-bench Verified): Opus 4.7 at 87.6 percent versus Gemini 3.1 Pro’s 80.6 percent. Meaningful 7-point gap.

Coding (SWE-bench Pro): 64.3 percent versus 54.2 percent. A 10-point gap in Opus 4.7's favor.

GPQA Diamond: Effectively tied. Gemini 3.1 Pro at 94.3 percent, Opus 4.7 at 94.2 percent.

Context window: Gemini 3.1 Pro has 2 million tokens versus Opus 4.7's 1 million. For workflows requiring full codebase ingestion or large legal archives in a single call, Gemini is the only practical option of the two.

Pricing: Gemini 3.1 Pro undercuts Opus 4.7 at $2 and $12 per million tokens for input and output respectively, compared to $5 and $25 for Opus 4.7. But Opus 4.7’s lead on the benchmarks that enterprise buyers prioritize, particularly SWE-bench and agentic reasoning, may justify the premium for customers whose workloads demand the highest capability. The Next Web

Multimodal: Gemini 3.1 Pro retains a clear edge in video understanding and audio processing, capabilities Opus 4.7 does not match.

What Opus 4.7 Does Not Do

This release has honest limitations that Anthropic acknowledges directly.

BrowseComp regression: The drop from 83.7 to 79.3 percent is real. If web research is central to your agentic pipeline, test before you migrate.

Terminal operations: GPT-5.4 leads on Terminal-Bench 2.0. Shell-heavy workflows have a better current home with OpenAI.

Context window: At 1 million tokens, Opus 4.7 handles most use cases. But it cannot compete with Gemini 3.1 Pro’s 2 million token ceiling for truly large-document workflows.

Mythos gap: Anthropic states directly that Opus 4.7 is less broadly capable compared to Mythos. The company’s testing showed Mythos is particularly good at exposing security vulnerabilities, and Opus 4.7 was deliberately trained to have reduced capabilities in that area, with safeguards that automatically detect and block requests that indicate prohibited or high-risk cybersecurity uses. 9to5Google

Costs may surprise on migration: The tokenizer can push spend up to 35 percent higher. This is the most underestimated detail in the launch.

Security and Safety Architecture

Anthropic stated it would keep Claude Mythos Preview’s release limited and test new cyber safeguards on less capable models first. Opus 4.7 is the first such model. Its cyber capabilities are not as advanced as those of Mythos Preview, and during training Anthropic experimented with efforts to differentially reduce these capabilities. Opus 4.7 ships with safeguards that automatically detect and block requests indicating prohibited or high-risk cybersecurity uses. Anthropic

Security professionals who wish to use Opus 4.7 for legitimate cybersecurity purposes, such as vulnerability research, penetration testing, and red-teaming, are invited to join Anthropic’s new Cyber Verification Program. Anthropic

This is a notable policy move. Anthropic is explicitly segmenting access to security capabilities by verified use case, an approach more restrictive than competitors currently take.

Migration Guide: What Breaks on Opus 4.7

Breaking API changes (will return 400 errors):

- Any call setting temperature, top_p, or top_k

- Extended thinking budgets via the old parameter structure

- Sampling parameters in general

Migration path: Switch to adaptive thinking, remove all sampling parameters, and use prompting to control output behavior instead.
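A pre-flight scrub of existing request payloads can catch the 400-triggering fields before traffic moves. The sketch below works on a plain request dict in the shape of the Messages API (`temperature`, `top_p`, `top_k`, and an old-style `thinking` block with `budget_tokens`). It deliberately does not add the adaptive-thinking replacement, whose exact request shape is not given here; take that from the current API docs. The helper name is hypothetical.

```python
import copy

# Sampling parameters this article reports as returning HTTP 400 on Opus 4.7.
REJECTED_SAMPLING_KEYS = ("temperature", "top_p", "top_k")


def migrate_request(params: dict) -> dict:
    """Return a cleaned copy of a Messages API request body for Opus 4.7.

    Hypothetical helper. It drops the sampling parameters and any old-style
    thinking block that sets budget_tokens. It does NOT add the adaptive-thinking
    setting that replaces it; take that request shape from the current API docs.
    """
    migrated = copy.deepcopy(params)  # leave the caller's dict untouched
    for key in REJECTED_SAMPLING_KEYS:
        migrated.pop(key, None)
    thinking = migrated.get("thinking")
    if isinstance(thinking, dict) and "budget_tokens" in thinking:
        del migrated["thinking"]  # old extended-thinking budget structure
    migrated["model"] = "claude-opus-4-7"
    return migrated


old_request = {
    "model": "claude-opus-4-6",  # assumed previous model ID, for illustration only
    "max_tokens": 16_000,
    "temperature": 0.2,
    "top_p": 0.9,
    "thinking": {"type": "enabled", "budget_tokens": 8_000},
    "messages": [{"role": "user", "content": "Summarize this diff."}],
}
new_request = migrate_request(old_request)
print(sorted(new_request))
```

On the sample request this leaves only `max_tokens`, `messages`, and `model`; behavior the sampling parameters used to shape then has to come from the prompt, as the migration path recommends.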

Non-breaking but behavior-changing:

- Instructions are now followed literally, not loosely

- Response length adapts to task complexity rather than defaulting to fixed verbosity

- Fewer tool calls by default at any given effort level

- More regular progress updates during long agentic traces

- Fewer subagents spawned by default

For Claude Code users, Opus 4.7 is already the default model. The /ultrareview command is available. The model’s stricter instruction following and improved self-correction should translate to fewer rounds of back-and-forth on complex tasks. Fello AI

Before migrating production traffic: recalculate cost estimates using the 1.0 to 1.35x tokenizer multiplier, run existing prompt suites against Opus 4.7 in a staging environment, and adjust any prompts that relied on loose instruction interpretation.

Winner Pick: Which Model Should You Use?

For professional software engineering and agentic coding, Opus 4.7 is the clear leader in April 2026. The SWE-bench Pro gap over GPT-5.4 (64.3 vs 57.7 percent) and the CursorBench jump to 70 percent are not close margins. Cursor’s own 50 percent adoption incentive signals confidence in the practical coding improvement.

For legal, financial, and knowledge-work tasks requiring rigorous reasoning and document analysis, Opus 4.7 wins again. BigLaw Bench at 90.9 percent and the confirmed improvements in financial analysis (Hebbia, Hex partner reports) back this up with production data, not just synthetic benchmarks.

For vision-heavy workflows, document extraction, and UI automation, Opus 4.7's roughly threefold increase in image pixel count and 79.5 percent visual navigation score make it competitive for the first time in this category. But if you need native video understanding or audio processing, Gemini 3.1 Pro is still the only practical option.

For agentic web research and browsing, GPT-5.4 remains the stronger choice, with GPT-5.4 Pro at 89.3 percent on BrowseComp versus Opus 4.7's 79.3 percent.

For cost-sensitive, high-volume production deployments, Gemini 3.1 Pro at $2/$12 per million tokens undercuts Opus 4.7 significantly. If your workload does not specifically require Opus-level coding or reasoning, the price gap is hard to justify.

For teams already running Opus 4.6 in production, the recommendation is to migrate new projects to Opus 4.7 immediately and schedule a controlled migration for existing production systems after re-benchmarking costs and re-testing prompts.

What Is Coming Next

The AI design tool that The Information reported on April 14 did not ship with Opus 4.7. Based on Anthropic’s own source code references (with caveats from Boris Cherny on reliability), the pipeline ahead includes Sonnet 4.8, something codenamed Capybara, and eventually a broad release of Mythos-class capability once the cyber safeguards tested on Opus 4.7 mature in production.

The unreleased Mythos Preview’s 77.8 percent on SWE-bench Pro versus Opus 4.7’s 64.3 percent shows Anthropic has substantial capability it is not yet shipping publicly. The gap between what is available and what is built is the most interesting story in Anthropic’s roadmap right now.