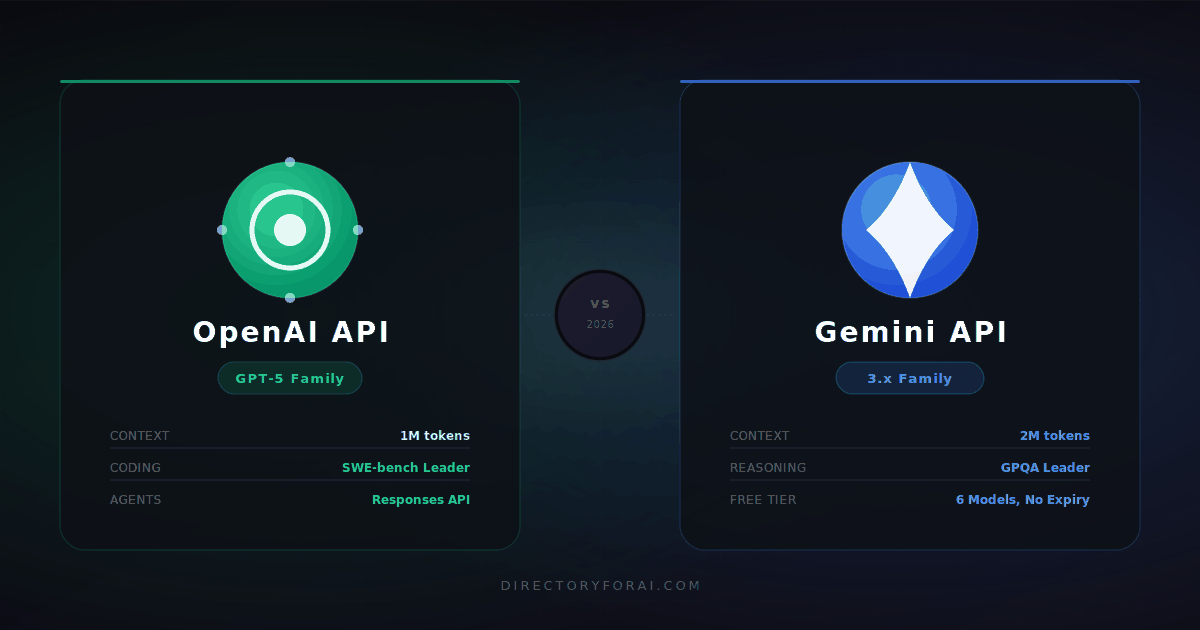

OpenAI API vs Gemini API

The AI API market looked very different two years ago. Developers picked between a handful of models, pricing was opaque, and “production-ready” meant GPT-4 with a prayer. Today, OpenAI and Google each maintain entire model families spanning nano-scale inference to frontier reasoning engines, with pricing tiers, batch discounts, context caching, and agent-native infrastructure layered on top. Choosing the wrong API at the architecture stage is no longer a cosmetic mistake. It is a cost, latency, and reliability problem that compounds at scale.

AI APIs have become core infrastructure. SaaS companies now embed language models in every product surface from onboarding flows to analytics pipelines. Startups structure their entire unit economics around token costs. Enterprise teams evaluate model providers with the same rigor as cloud vendors. In this environment, selecting between the OpenAI API and the Gemini API requires understanding not just which model scores better on a benchmark, but which platform’s pricing model, ecosystem, context architecture, and agent tooling best matches your workload and scale trajectory.

The wrong choice leads to predictable failure modes: output-heavy workloads running through the wrong model tier can spike monthly costs by 4x to 10x. Choosing a high-latency model for a real-time chat application degrades user experience measurably. Building agents on a platform without mature orchestration tooling adds months of engineering work. Picking an API with an immature ecosystem means hiring around gaps that your competitors already solved.

This guide covers both platforms as they exist in March 2026, with real pricing numbers, current benchmarks, architectural tradeoffs, and specific decision frameworks for the workload types developers, founders, and SaaS builders actually encounter. By the end, you will have a clear answer for your use case, not a generic list of features.

TL;DR: Which API Should You Choose?

Best for cost efficiency at scale: OpenAI. GPT-5 Nano at $0.05/$0.40 per million tokens and GPT-5 Mini at $0.25/$2.00 provide a cost cascade that lets you route cheap tasks cheaply. The Batch API cuts costs by 50% for asynchronous jobs. Prompt caching saves up to 90% on repeated context. The math works at volume.

Best for reasoning: Tied at the flagship level, OpenAI for coding-specific reasoning. GPT-5.4 scores 71.7% on SWE-bench Verified versus Gemini 3.1 Pro’s 63.8%. For pure scientific reasoning, Gemini 3.1 Pro leads GPQA Diamond at 94.3% versus GPT-5.4’s 92.8%. Both offer extended thinking modes.

Best for coding: OpenAI. The GPT-5.3 Codex model scored 77.3% on Terminal-Bench 2.0. GPT-5.4 resolves roughly 1 in 8 more real-world GitHub issues than Gemini in SWE-bench testing. Codex CLI is a category of its own.

Best for startups and MVPs: Gemini. Google’s free tier gives persistent access to six models including Gemini 2.5 Flash and 3 Flash Preview with no credit card required. For prototyping and early-stage products, that is a meaningful operational advantage.

Best for document-heavy and enterprise workflows: Gemini. A 2-million-token context window on Gemini 3.1 Pro (versus 1 million on GPT-5.4), native Google Workspace and Vertex AI integration, and tiered pricing that rewards long-context efficiency make Gemini the natural fit for document processing, legal analysis, and data-heavy enterprise pipelines.

Best for agent workflows and automation: OpenAI. The Responses API, Agents SDK, and built-in tools (web search, file search, computer use, code interpreter, remote MCP) represent a more mature, production-tested agentic infrastructure than anything currently available through the Gemini API.

Best multimodal stack: OpenAI for action-oriented applications, Gemini for understanding-heavy applications. GPT-5.4 adds native computer use and desktop automation. Gemini 3.1 Pro offers deeper native multimodal understanding across text, image, audio, and video.

OpenAI API vs Gemini API: Quick Comparison Table (March 2026)

| Feature | OpenAI (GPT-5 Family) | Google (Gemini 3.x / 2.5 Family) |
|---|---|---|
| Flagship model | GPT-5.4 | Gemini 3.1 Pro |
| Flagship input price (per 1M tokens) | $10.00 | ~$2.00 (~$4.00 above 200K context) |
| Flagship output price (per 1M tokens) | $30.00 | ~$12.00 (~$18.00 above 200K context) |
| Mid-tier model | GPT-5 ($1.25 input / $10.00 output) | Gemini 2.5 Pro ($1.25 input / $10.00 output) |
| Budget model | GPT-5 Nano ($0.05 input / $0.40 output) | Gemini 2.5 Flash-Lite ($0.10 input / $0.40 output) |
| Context window (flagship) | 1M tokens | 2M tokens |
| Max output tokens | 128K | 8K to 32K (varies by model) |
| Free tier | $5 credit (expires in 3 months) | Persistent free tier, 6 models, 1,000 req/day |
| Batch API discount | 50% off | 50% off |
| Prompt caching discount | Up to 90% off | Up to 90% off |
| Coding benchmark (SWE-bench Verified) | ~80% (GPT-5.2) / 71.7% (GPT-5.4) | 80.6% (Gemini 3.1 Pro) |
| Science reasoning (GPQA Diamond) | 92.8% (GPT-5.4) | 94.3% (Gemini 3.1 Pro) |
| Native computer use | Yes (GPT-5.4 via Responses API) | No |
| Agent framework | Responses API + Agents SDK (mature) | Google ADK (growing) |
| Fine-tuning | Available (multiple tiers) | Available via Vertex AI |
| Multimodal inputs | Text, image, audio, video | Text, image, audio, video |
| Native image generation | Yes (GPT Image via Responses API) | Yes (Imagen 3) |
| Ecosystem | OpenAI platform, Azure OpenAI, Codex CLI | Google Cloud, Vertex AI, AI Studio, Workspace |
| Primary advantage | Coding, agents, ecosystem maturity | Context length, scientific reasoning, Google integration |

Key insight from the table: The mid-tier pricing has converged almost exactly. Both GPT-5 and Gemini 2.5 Pro come in at $1.25/$10.00 per million tokens. The real differentiation sits at the extremes: OpenAI’s nano models are cheaper per token at the low end, Gemini’s Pro models offer more context per dollar at the high end, and the flagship tiers price differently in ways that only matter at specific scale points.

Model Lineup: OpenAI and Gemini

OpenAI Model Lineup

OpenAI’s 2026 model stack is organized around a cost-performance cascade that lets developers route tasks intelligently without touching the API format.

GPT-5 Nano ($0.05 input / $0.40 output per 1M tokens) is the workhorse for classification, routing, and simple extraction. At this price point, running 10 million input tokens costs 50 cents. It is designed for the tasks developers previously over-engineered: intent detection, basic summarization, entity tagging. These do not require frontier intelligence but do require volume throughput.

GPT-5 Mini ($0.25 input / $2.00 output per 1M tokens) occupies the practical middle ground for most SaaS applications. It is capable enough for coherent multi-turn conversation, instruction following, and basic code assistance, and cheap enough to run at meaningful scale without burning your runway.

GPT-5 ($1.25 input / $10.00 output per 1M tokens) is the general-purpose model for production applications requiring strong reasoning, long-form generation, and reliable instruction following. This is the model most teams standardize on before evaluating whether they need something more specialized. GPT-5 scores 74.9% on SWE-bench Verified and achieves 88% on Aider polyglot.

GPT-5.2 ($1.75 input / $14.00 output per 1M tokens) is the coding and professional-grade tier. It scored approximately 80% on SWE-bench Verified, meaning it resolves roughly 4 out of 5 real GitHub issues autonomously. It handles multi-file codebases, complex debugging chains, and long-form document analysis with higher consistency than GPT-5.

GPT-5.3 Codex is purpose-built for developer workflows. It scored 77.3% on Terminal-Bench 2.0, a benchmark measuring real command-line task navigation across operating systems, package managers, and build systems. It powers Codex CLI and is the strongest currently available model for autonomous terminal-based development.

GPT-5.4 ($10.00 input / $30.00 output per 1M tokens) is the frontier tier. It introduced native computer use via the Responses API, meaning the model can see a screen, click, type, and navigate desktop applications. No other provider currently matches this capability at the API level. Its 1M token context window, 128K max output, and 71.7% SWE-bench Verified score make it the most capable general-purpose model OpenAI has shipped.

O-series reasoning models (O3 Pro at $150/1M tokens) sit above the GPT-5 family for use cases requiring deep chain-of-thought, mathematical proofs, and research-grade analysis. These are not chatbot models. They are inference engines for problems that warrant the cost.

The Batch API applies a flat 50% discount to all models for asynchronous processing within a 24-hour window. Prompt caching offers up to 90% savings on repeated context. For most production workloads with any pattern repetition, effective per-token costs run significantly below the listed rates.
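The cost cascade described in this lineup can be expressed as a small routing table. The sketch below is illustrative only: the task categories and the `pick_model` helper are assumptions, and the model names are the display names used in this article rather than real API model identifiers.

```python
# Hypothetical mapping from task type to the cheapest tier that handles it.
ROUTES = {
    "classification": "GPT-5 Nano",
    "entity_tagging": "GPT-5 Nano",
    "chat": "GPT-5 Mini",
    "code_review": "GPT-5.2",
}
DEFAULT_MODEL = "GPT-5"  # general-purpose fallback for anything unlisted


def pick_model(task_type: str) -> str:
    """Return the model tier for a task, falling back to the default tier."""
    return ROUTES.get(task_type, DEFAULT_MODEL)


for task in ("classification", "chat", "contract_summary"):
    print(task, "->", pick_model(task))
```

In practice the routing decision is usually driven by an evaluation set rather than a fixed table, but a static map like this is often enough to stop cheap tasks from hitting an expensive tier.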

Gemini Model Lineup

Google’s model strategy in 2026 emphasizes breadth and tiering, with clear segmentation between speed-optimized, balanced, and reasoning-focused models.

Gemini 2.0 Flash-Lite ($0.075 input / $0.30 output per 1M tokens) is Google’s floor pricing and one of the cheapest commercial-grade models available from any major provider for text tasks. It is extremely fast, designed for real-time classification and routing, and pairs well with higher-tier models in a cost-routing architecture.

Gemini 2.5 Flash-Lite ($0.10 input / $0.40 output per 1M tokens) and Gemini 2.5 Flash ($0.30 input / $2.50 output per 1M tokens) represent Google’s practical middle tier. The Flash models include hybrid reasoning mode (“Thinking” mode at $3.50 per million output tokens for the 2.5 Flash) which provides enhanced reasoning on demand without forcing developers to use the full Pro model for every request.

Gemini 2.5 Pro ($1.25 input / $10.00 output per 1M tokens for contexts under 200K) is Google’s production flagship for most developer workloads. It delivers near-frontier performance at GPT-5 pricing. Critically, contexts over 200K tokens cost $2.50 input / $15.00 output, a tiered pricing structure that matters enormously for long-document applications.

Gemini 3 Flash ($0.50 input / $3.00 output per 1M tokens) is the first release in the Gemini 3 generation optimized for speed. It is available on the free tier, making it accessible for prototyping and early-stage applications without financial commitment.

Gemini 3 Pro ($2.00 input / $12.00 output per 1M tokens for standard context; $4.00/$18.00 for contexts over 200K) is Google’s mid-range frontier model. It features a 2M token context window, strong multimodal reasoning, and deep integration with the Google ecosystem.

Gemini 3.1 Pro is Google’s current flagship. It leads GPQA Diamond at 94.3%, scores 80.6% on SWE-bench Verified, and offers the largest context window among frontier models at 2 million tokens. This is the model for research-heavy, document-heavy, and scientifically demanding workloads.

Gemini 3.1 Flash provides the speed of Flash at the 3.1 generation level, making it the best option for high-throughput real-time applications that need current-generation quality without Pro model latency or cost.

Pricing: The Full Picture

Token pricing is only one variable. The effective cost of running either API at scale depends on the combination of model tier, context length, caching behavior, batch strategy, and output ratio. Getting this wrong is the most common way teams blow their AI budget.

How Token Pricing Actually Works

An input token is roughly 4 characters of English text, about three-quarters of a word. A 1,000-word prompt is approximately 1,333 tokens. Output tokens are the model’s response, billed separately and almost always at a higher rate than input tokens.

The input/output asymmetry exists because generating tokens is computationally more expensive than reading them. A model processes your input in parallel (fast) but generates output sequentially (slow, expensive). This asymmetry has major implications: output-heavy workloads like long-form content generation, detailed code output, and comprehensive analysis cost proportionally more than input-heavy workloads like document classification or retrieval augmentation.

For most chat applications, input tokens and output tokens appear in roughly a 3:1 to 5:1 ratio. For coding assistants with verbose output, that ratio can flip to 1:3 or worse. Understanding your specific ratio before choosing a model tier is essential.
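The token rule of thumb and the input/output asymmetry can be checked with a few lines of arithmetic. This is a rough sketch: the function names are illustrative, the 4/3 tokens-per-word factor is the approximation quoted above, and a real tokenizer will produce different counts.

```python
def estimate_tokens(word_count: int) -> int:
    """Rule-of-thumb estimate: one token is about three-quarters of a word."""
    return round(word_count * 4 / 3)


def request_cost(input_tokens: int, output_tokens: int,
                 input_price_per_m: float, output_price_per_m: float) -> float:
    """Dollar cost of one request, given prices per million tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


prompt_tokens = estimate_tokens(1_000)  # a 1,000-word prompt

# Same prompt at GPT-5 list prices ($1.25 in / $10.00 out), two output profiles.
chat_cost = request_cost(prompt_tokens, prompt_tokens // 4, 1.25, 10.00)  # about 4:1 input:output
code_cost = request_cost(prompt_tokens, prompt_tokens * 3, 1.25, 10.00)   # 1:3 input:output

print(f"{prompt_tokens} tokens, chat ${chat_cost:.4f}, code ${code_cost:.4f}")
```

With identical input, the output-heavy profile costs several times more per request, which is why the ratio matters before the model tier does.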

Token Pricing Comparison Table (March 2026)

| Model | Input (per 1M) | Output (per 1M) | Context Window |
|---|---|---|---|
| GPT-5 Nano | $0.05 | $0.40 | 128K |
| GPT-5 Mini | $0.25 | $2.00 | 128K |
| GPT-5 | $1.25 | $10.00 | 128K |
| GPT-5.2 | $1.75 | $14.00 | 128K |
| GPT-5.4 | $10.00 | $30.00 | 1M |
| Gemini 2.0 Flash-Lite | $0.075 | $0.30 | 1M |
| Gemini 2.5 Flash-Lite | $0.10 | $0.40 | 1M |
| Gemini 2.5 Flash | $0.30 | $2.50 | 1M |
| Gemini 2.5 Pro (under 200K context) | $1.25 | $10.00 | 1M |
| Gemini 2.5 Pro (over 200K context) | $2.50 | $15.00 | 1M |
| Gemini 3 Flash | $0.50 | $3.00 | 1M |
| Gemini 3 Pro (under 200K context) | $2.00 | $12.00 | 2M |
| Gemini 3 Pro (over 200K context) | $4.00 | $18.00 | 2M |
| Gemini 3.1 Pro | ~$2.00 to $4.00 | ~$12.00 to $18.00 | 2M |

Sources: OpenAI API Pricing, Google Gemini API Pricing, Vertex AI Pricing

The convergence at the $1.25/$10.00 tier between GPT-5 and Gemini 2.5 Pro is not a coincidence. Competitive pricing pressure in 2025 pushed both providers toward the same equilibrium for mid-tier production models.

Free Tier and Trial Credits

OpenAI gives new API accounts $5 in free credits with no credit card required, expiring after 3 months. At GPT-5 Nano pricing ($0.05/$0.40), $5 covers 100 million input tokens or 12.5 million output tokens. At GPT-5 Mini pricing ($0.25/$2.00), it covers 20 million input tokens or 2.5 million output tokens. The free credit is a temporary onramp, not a persistent tier.

Gemini offers a genuinely persistent free tier through Google AI Studio: up to 1,000 requests per day, 5 to 15 requests per minute, and access to six models including Gemini 2.5 Flash, Gemini 2.5 Flash-Lite, Gemini 3 Flash Preview, Gemini 2.0 Flash, Gemini Embedding, and Gemma 3. This free tier has no expiration and requires no credit card setup. For indie developers building MVPs, small apps under light traffic, or anyone prototyping, Gemini’s free tier means you can run a functional application with zero cost until you hit rate limits.

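The practical difference: an indie developer with 500 daily active users running a simple chatbot could run indefinitely on Gemini’s free tier as long as traffic stays under the 1,000 requests/day cap (about two requests per user per day), while the same load on OpenAI moves to a paid account as soon as the $5 credit is spent or expires.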

Batch API Discounts (Often Overlooked)

Both providers offer 50% off for asynchronous batch processing, which completes within 24 hours. This is enormous for non-real-time workloads: content generation pipelines, document analysis queues, SEO content production, nightly analytics summarization, and any workflow where a 24-hour window is acceptable. A team paying $1.25/million tokens for GPT-5 drops to $0.625/million with the Batch API. On Gemini 2.5 Pro the same logic applies, dropping from $1.25 to $0.625/million.

The Batch API is consistently underused by teams that build everything as synchronous API calls by default. If 40% of your workload is non-real-time, the Batch API effectively reduces your total monthly bill by 20%.
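The blended discount is simple to compute for any traffic mix. A minimal sketch, assuming the batch discount applies uniformly to the asynchronous share of the bill; `blended_bill` is an illustrative helper, not part of either provider's SDK.

```python
def blended_bill(base_bill: float, async_share: float,
                 batch_discount: float = 0.50) -> float:
    """Monthly bill when `async_share` of spend moves to a discounted batch endpoint."""
    if not 0.0 <= async_share <= 1.0:
        raise ValueError("async_share must be between 0 and 1")
    realtime_part = base_bill * (1 - async_share)
    batch_part = base_bill * async_share * (1 - batch_discount)
    return realtime_part + batch_part


bill = blended_bill(1_000.0, async_share=0.40)
print(f"${bill:.2f} instead of $1000.00")  # 40% async at 50% off is a 20% overall cut
```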

Prompt Caching

Both APIs offer context caching, where frequently repeated content (system prompts, document context, conversation history boilerplate) is cached and re-read at approximately 10% of the standard input price. The practical savings are substantial for applications with large, stable system prompts or repeated document context.

An application sending a 50,000-token system context on every request at GPT-5 pricing spends $0.0625 per call on that context alone. With 90% caching, that drops to $0.00625. At 10,000 daily requests, uncached context costs $625/day versus $62.50 cached, a $562.50 daily difference from a single optimization.
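The same arithmetic as a reusable calculation. This sketch assumes every request is a cache hit and that the cached discount is a flat 90% of the input price; real hit rates and cache storage fees, where they apply, will move the numbers. The function name is illustrative.

```python
def daily_context_cost(context_tokens: int, requests_per_day: int,
                       input_price_per_m: float,
                       cached_discount: float = 0.0) -> float:
    """Daily dollar cost of resending a fixed context on every request."""
    per_call = context_tokens * input_price_per_m / 1_000_000
    return per_call * (1 - cached_discount) * requests_per_day


# 50,000-token context, 10,000 requests/day, GPT-5 input price of $1.25/1M.
uncached = daily_context_cost(50_000, 10_000, 1.25)
cached = daily_context_cost(50_000, 10_000, 1.25, cached_discount=0.90)

print(f"uncached ${uncached:.2f}/day, cached ${cached:.2f}/day, "
      f"saved ${uncached - cached:.2f}/day")
```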

Real-World Cost Scenarios

Scenario 1: Small app running 100K tokens/month

This is a side project or very early-stage product with minimal traffic.

| Model | Input Cost (70K tokens) | Output Cost (30K tokens) | Monthly Total |
|---|---|---|---|
| GPT-5 Nano | $0.0035 | $0.012 | $0.016 |
| GPT-5 Mini | $0.018 | $0.060 | $0.078 |
| Gemini 2.5 Flash-Lite | $0.007 | $0.012 | $0.019 |
| Gemini 2.5 Flash | $0.021 | $0.075 | $0.096 |

At this scale, cost is irrelevant. Both APIs cost under $1/month. The decision should be based entirely on quality, developer experience, and free tier availability.

Scenario 2: Growing SaaS running 10M tokens/month

A product with real users generating meaningful API traffic (assume 70% input, 30% output ratio).

| Model | Input Cost (7M tokens) | Output Cost (3M tokens) | Monthly Total |
|---|---|---|---|
| GPT-5 | $8.75 | $30.00 | $38.75 |
| GPT-5.2 | $12.25 | $42.00 | $54.25 |
| Gemini 2.5 Pro | $8.75 | $30.00 | $38.75 |
| Gemini 2.5 Flash | $2.10 | $7.50 | $9.60 |
| GPT-5 Mini | $1.75 | $6.00 | $7.75 |

At this tier, the GPT-5/Gemini 2.5 Pro parity is real. The question is whether you need Pro-level quality or whether a mini/flash tier satisfies your quality bar, because dropping one tier saves roughly 75% to 80% on costs.
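The scenario table can be regenerated for any volume and input/output split. A minimal sketch using the list prices quoted in this article; the `PRICES` dictionary and `monthly_cost` helper are illustrative names, and the prices should be replaced with current ones before relying on the output.

```python
# (input, output) dollars per 1M tokens, copied from the pricing table above.
PRICES = {
    "GPT-5": (1.25, 10.00),
    "GPT-5 Mini": (0.25, 2.00),
    "Gemini 2.5 Pro": (1.25, 10.00),
    "Gemini 2.5 Flash": (0.30, 2.50),
}


def monthly_cost(model: str, total_tokens_m: float, input_share: float) -> float:
    """Monthly dollar cost for `total_tokens_m` million tokens at a given input share."""
    in_price, out_price = PRICES[model]
    input_m = total_tokens_m * input_share
    output_m = total_tokens_m * (1 - input_share)
    return input_m * in_price + output_m * out_price


# Scenario 2: 10M tokens/month, 70% input and 30% output.
costs = {model: monthly_cost(model, 10, 0.70) for model in PRICES}
savings = 1 - costs["GPT-5 Mini"] / costs["GPT-5"]

for model, cost in sorted(costs.items(), key=lambda item: item[1]):
    print(f"{model:<18} ${cost:,.2f}")
print(f"GPT-5 -> GPT-5 Mini saves {savings:.0%}")
```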

Scenario 3: Large-scale product running 100M tokens/month

This is a funded product or enterprise deployment with significant daily traffic (assume 60% input, 40% output, skewed toward output because of detailed responses).

| Model | Input Cost (60M tokens) | Output Cost (40M tokens) | Monthly Total |
|---|---|---|---|
| GPT-5 | $75.00 | $400.00 | $475.00 |
| GPT-5.2 | $105.00 | $560.00 | $665.00 |
| Gemini 2.5 Pro | $75.00 | $400.00 | $475.00 |
| Gemini 2.5 Flash | $18.00 | $100.00 | $118.00 |
| GPT-5 Mini | $15.00 | $80.00 | $95.00 |

With Batch API (50% off for async portions, assuming 40% of traffic is non-real-time):

- GPT-5 effective cost: ~$380/month

- Gemini 2.5 Pro effective cost: ~$380/month

- GPT-5 Mini: ~$76/month

- Gemini 2.5 Flash: ~$94/month

The output token multiple is what kills costs at scale. An output-heavy workload on GPT-5.4 at $30.00/million would cost $1,200/month just for output at 40M output tokens. The same workload on Gemini 2.5 Flash costs $100.

Hidden Costs Most Teams Miss

Multi-turn conversation context accumulation: Every message in a conversation that you include in subsequent calls adds to input token count. In a 10-turn conversation where each response averages 500 tokens, the request at turn 10 carries roughly 5,000 tokens of earlier responses as extra input, and every earlier turn has already paid for its own share of that history. At scale across many concurrent users, this compounds. Teams that include full conversation history without pruning pay 2x to 5x more than teams that truncate to recent context or use summary compression.

Long context surcharges on Gemini: Both Gemini 2.5 Pro and Gemini 3 Pro double their input pricing for contexts over 200K tokens. A 250,000-token prompt costs twice as much per input token as a 150,000-token prompt on these models. This is a 100% price jump at the context boundary that catches teams processing large documents by surprise.

Audio input surcharges: Gemini’s audio input costs 2x to 7x more than text input depending on the model tier. An application with voice inputs that processes significant audio data will see costs that diverge sharply from pure-text estimates.

Retry costs from rate limit hits: If your application hits rate limits and retries without exponential backoff, failed calls that generated partial outputs may still bill tokens. Well-implemented retry logic and rate limit monitoring are operational requirements, not nice-to-haves.

Thinking mode costs on Gemini Flash: Gemini 2.5 Flash’s Thinking mode is priced at $3.50/million output tokens for thinking tokens, versus $2.50/million for regular output. If you enable Thinking mode globally rather than selectively, you inflate costs on requests that do not benefit from extended reasoning.
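For the retry item above, exponential backoff with jitter is the usual pattern. The sketch below is provider-agnostic: `RateLimitError` is a hypothetical stand-in for whatever exception your client raises on an HTTP 429, and `send` is any zero-argument callable that performs the request.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's rate-limit (HTTP 429) error."""


def call_with_backoff(send, max_attempts=5, base_delay=1.0,
                      max_delay=30.0, sleep=time.sleep):
    """Call `send()` and retry on RateLimitError with exponential backoff and jitter."""
    for attempt in range(max_attempts):
        try:
            return send()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # The delay ceiling doubles each attempt (1s, 2s, 4s, ...) up to max_delay.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter spreads retries out so clients do not retry in lockstep.
            sleep(random.uniform(0, ceiling))
```

The `sleep` parameter is injectable so the retry logic can be unit-tested without waiting; production code would leave it at the default.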

Pricing Verdict

Gemini wins on free-tier access and ultra-budget prototyping. OpenAI wins on cost efficiency through model tiering and prompt optimization at scale. At the mid-tier ($1.25/$10.00), both are identical. The real cost advantage of each platform emerges through architecture: OpenAI’s nano model cascade enables aggressive cost routing, and Gemini’s free tier and batch discounts reward non-time-sensitive workloads.

Performance and Benchmarks

Benchmarks are proxies, not guarantees. A model that scores 94% on GPQA Diamond and 80% on SWE-bench may produce outputs that fail your specific evaluation criteria. Treat the numbers below as directional signals, then test on your actual data.

Reasoning

On GPQA Diamond, the most rigorous publicly available reasoning benchmark covering graduate-level scientific questions, Gemini 3.1 Pro leads at 94.3% versus GPT-5.4 at 92.8%. At GPT-5.4 Pro tier, OpenAI closes this gap to 94.4%, effectively identical.

For multi-step logical reasoning in complex, ambiguous domains, GPT-5.2 and its successors maintain an edge in consistency. OpenAI’s internal evaluations show GPT-5.2 winning or tying in over 70% of knowledge tasks across 44 occupational domains. Gemini 3 Pro’s “Deep Think” variant rivals or exceeds GPT-5.2 on pure logic benchmarks, but at a significant latency and cost premium.

The practical takeaway: for most reasoning workloads, both platforms perform at a level that is indistinguishable in production. The differences surface in edge cases: handling highly ambiguous instructions, maintaining chain-of-thought across many reasoning steps, and producing explanations that are both accurate and consistently structured.

Coding

GPT-5.4 scores 71.7% on SWE-bench Verified versus Gemini 3.1 Pro’s 63.8%. This roughly 8-point gap means GPT-5.4 resolves about 12% more real-world GitHub issues in relative terms. On SWE-bench Verified at the GPT-5.2 level, the comparison tightens. GPT-5.2 at approximately 80% versus Gemini 3.1 Pro’s 80.6% is a statistical tie.

On Terminal-Bench 2.0, which measures real terminal workflow navigation, GPT-5.3 Codex leads at 77.3%. Google has no direct equivalent to Codex CLI, which combines model capability with autonomous terminal operation as a first-party product.

Where Gemini competes: for understanding large codebases end to end, the 2M token context window holds roughly twice as much code in a single prompt as GPT-5.4’s 1M context. Assuming roughly 10 to 15 tokens per line of code, that is on the order of 150,000 lines versus about 75,000. For codebase-level understanding tasks like audit reviews, security scanning, and cross-file refactoring analysis, Gemini’s context advantage translates directly to fewer chunking workarounds.

Gemini Code Assist provides free coding assistance in VS Code and JetBrains backed by Gemini 2.5 Pro, making it a strong alternative for cost-conscious developers.

Writing Quality

Both models produce coherent, structured long-form content. OpenAI maintains stronger tone consistency across extended outputs, while Gemini shows slightly more stylistic variance in long-form generation. For marketing copy, blog articles, and structured SEO content, either platform is viable. The difference is not in raw quality but in predictability. If your application has strict brand voice requirements, OpenAI’s instruction-following precision may reduce post-processing work.

Hallucination and Accuracy

GPT-5.2 introduced improved safeguards and reality consistency layers per OpenAI’s release notes, reducing measurable hallucination rates versus earlier model generations. Gemini 3 Pro’s alignment layer is described as thinner, which some users report produces less filtered and more direct responses, but at the cost of occasionally greater hallucination risk in edge cases.

For high-stakes accuracy requirements in medical, legal, and financial applications, prompt verification layers and output validation are necessary regardless of which model you use. Neither platform’s base models should be treated as ground truth without cross-referencing.

Latency

Gemini 3.1 Flash-Lite delivers sub-200 millisecond response latency for typical queries, making it the fastest production model available at this generation. GPT-5.4 cut latency approximately 18% compared to GPT-5, improving interactive flow. For real-time voice assistants and synchronous user-facing interfaces, Gemini Flash-Lite is the stronger default. For coding tools and agent workflows where a few hundred milliseconds of additional latency is acceptable, OpenAI’s models match developer expectations.

Structured Output and JSON Mode Reliability

One of the persistent developer pain points across both platforms is JSON mode reliability: the ability to guarantee that the model produces valid, schema-conforming structured output without parsing failures. OpenAI’s structured outputs feature, available in the Responses API with strict JSON schema enforcement, produces more reliable machine-readable output for production workflows. Gemini supports structured output but has shown more variability in schema adherence across complex nested structures. For data extraction pipelines and classification systems where output parsing is automated, this difference has real operational cost.
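Whichever provider you use, an automated pipeline should still validate structured output before acting on it. A minimal sketch using only the standard library; the `SCHEMA` fields and the `parse_structured_output` helper are made up for illustration, and a real pipeline would more likely use a full JSON Schema validator.

```python
import json


def parse_structured_output(raw: str, required: dict) -> dict:
    """Parse model output as JSON and check required keys and value types.

    Raises ValueError with a short reason so the caller can retry or log.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected_type in required.items():
        if key not in data:
            raise ValueError(f"missing field: {key}")
        if not isinstance(data[key], expected_type):
            raise ValueError(f"field {key!r} should be {expected_type.__name__}")
    return data


# Hypothetical schema for a classification response.
SCHEMA = {"label": str, "confidence": float}

result = parse_structured_output('{"label": "spam", "confidence": 0.93}', SCHEMA)
print(result["label"], result["confidence"])
```

Counting how often this check fails per model on your own prompts is a cheap way to measure the schema-adherence difference described above.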

Context Length and Long Document Handling

Context length is the most misunderstood feature in AI API comparisons. Having a large context window is necessary but not sufficient. What matters is what the model does with it, how much it costs to fill it, and whether your application actually benefits from large context or would be better served by RAG.

What Context Window Actually Means for Your Application

A token is approximately 4 characters of English text. A 1M token context window fits roughly 750,000 words, the equivalent of several full-length novels. Gemini 3.1 Pro’s 2M token context window fits approximately 1.5 million words, roughly a small encyclopedia volume.

In practical terms: a 100-page legal contract is approximately 40,000 to 60,000 tokens. A full codebase with 500 files might run 500,000 to 2,000,000 tokens depending on file sizes. An hour of meeting transcript is approximately 10,000 to 15,000 tokens. A multi-month conversation history with dozens of turns could accumulate 50,000 to 200,000 tokens.

For most SaaS applications (chatbots, content tools, customer support systems), the real-world context needed per conversation rarely exceeds 30,000 to 50,000 tokens. Neither GPT-5’s 128K context nor Gemini 2.5 Flash’s 1M context is the binding constraint for these workloads. Where context size becomes critical is in document processing, codebase analysis, legal review, and enterprise data workflows.
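A quick fit check makes the point concrete. This sketch uses the 4-characters-per-token approximation and the context windows quoted in this article; the 8,000-token output reserve and the `models_that_fit` helper are assumptions for illustration.

```python
# Context windows in tokens, as listed in this article.
CONTEXT_WINDOWS = {
    "GPT-5": 128_000,
    "GPT-5.4": 1_000_000,
    "Gemini 2.5 Pro": 1_000_000,
    "Gemini 3.1 Pro": 2_000_000,
}


def models_that_fit(document_chars: int, reserved_output_tokens: int = 8_000) -> list:
    """Return models whose window holds the document plus room for the response."""
    needed = document_chars // 4 + reserved_output_tokens  # ~4 characters per token
    return [name for name, window in CONTEXT_WINDOWS.items() if needed <= window]


print(models_that_fit(240_000))    # ~60K tokens, e.g. a 100-page contract
print(models_that_fit(6_000_000))  # ~1.5M tokens, e.g. a large codebase
```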

Context Window Comparison Table

| Model | Context Window | Max Output | Long Context Pricing |
|---|---|---|---|
| GPT-5 / GPT-5.2 | 128K | 16K to 32K | No tiered surcharge |
| GPT-5.4 | 1M | 128K | No tiered surcharge |
| Gemini 2.5 Flash | 1M | 8K | No tiered surcharge |
| Gemini 2.5 Pro | 1M | 8K | 2x input price above 200K |
| Gemini 3 Pro | 2M | 8K | 2x input price above 200K |
| Gemini 3.1 Pro | 2M | 8K to 32K | 2x input price above 200K |

The max output token difference deserves attention. GPT-5.4 supports 128K output tokens, meaning it can generate an extremely long, coherent single response. Most Gemini models cap at 8K output tokens. For applications that require generating very long documents, detailed reports, or extensive code files in a single API call, this is a structural limitation on Gemini that forces splitting into multiple calls.

Real-World Context Usage Scenarios

Processing Large PDFs: A 200-page legal document is approximately 80,000 to 120,000 tokens. Both Gemini 2.5 Pro and GPT-5.4 can process this without chunking. For documents exceeding 200K tokens (multi-contract reviews, entire case files, large research compilations), Gemini handles the full document but Gemini Pro models trigger a pricing tier jump at that 200K boundary. GPT-5.4 at 1M tokens handles extremely long documents without any tiered surcharge.

Multi-Turn Conversations: At 50 turns with 300 tokens average per response, conversation history grows to 15,000 tokens. At 200 turns, it reaches 60,000 tokens. For very long conversations, history management strategies (summarization, truncation to recent N turns) matter more than raw context size: the per-call cost of resending history grows linearly with turn count, so the cumulative cost over a conversation grows roughly with the square of the turn count. A naive implementation that includes full conversation history at GPT-5 pricing pays $1.25/million for every history token on every call. A 10,000-turn conversation with a team of users can accumulate thousands of dollars in pure history token cost.

RAG vs. Long Context: For knowledge base applications, the question is whether to embed documents and retrieve relevant chunks (RAG) or to include the full document collection in context (long-context ingestion). Long context is simpler to implement and avoids retrieval errors but costs more per query. RAG requires an embedding pipeline but reduces per-query token cost dramatically for large knowledge bases. Gemini’s 2M token window reduces but does not eliminate the need for RAG. For a knowledge base exceeding 2M tokens, retrieval is still necessary. For knowledge bases under 500K tokens queried many times, long-context injection may be cost-competitive with RAG after accounting for embedding infrastructure.
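For the multi-turn case above, the effect of truncating history is easy to quantify. The sketch below counts only the history tokens re-sent as input over a whole conversation; it assumes a flat 300 tokens per turn and ignores the new user message and any system prompt. `history_tokens_sent` is an illustrative helper.

```python
from typing import Optional


def history_tokens_sent(turns: int, tokens_per_turn: int,
                        keep_last: Optional[int] = None) -> int:
    """Total history tokens re-sent as input over a whole conversation.

    On turn k the request carries the previous k-1 turns, or only the
    most recent `keep_last` of them when a sliding window is used.
    """
    total = 0
    for turn in range(1, turns + 1):
        previous = turn - 1
        if keep_last is not None:
            previous = min(previous, keep_last)
        total += previous * tokens_per_turn
    return total


full = history_tokens_sent(200, 300)                  # resend everything
windowed = history_tokens_sent(200, 300, keep_last=10)  # keep the last 10 turns

print(f"full history: {full:,} tokens, last-10 window: {windowed:,} tokens")
```

For a 200-turn conversation the sliding window sends roughly a tenth of the history tokens, at the price of the model forgetting older turns unless they are summarized.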

The 200K Pricing Cliff on Gemini

Gemini 2.5 Pro and Gemini 3 Pro charge 2x the input price for contexts exceeding 200K tokens. This means a 250,000-token prompt on Gemini 2.5 Pro costs $2.50/million input tokens instead of $1.25/million, and Gemini 3 Pro moves from $2.00 to $4.00. Teams that build their architecture assuming Gemini’s large context window is always priced at the headline rate are surprised when long-document processing costs come in at double their estimates.

GPT-5.4 has no tiered pricing surcharge. One million tokens costs the same per-token rate as 10,000 tokens. For truly large context workloads above 200K tokens, the gap narrows, although at the list prices in the table above GPT-5.4’s $10.00/million input rate is still higher than Gemini’s surcharged rates.
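The cliff is easiest to see as a step function. This sketch uses the Gemini 2.5 Pro input prices quoted in this article and assumes, as the description above implies, that the higher rate applies to the whole prompt once it crosses 200K tokens rather than only to the excess. The function name is illustrative.

```python
LONG_CONTEXT_THRESHOLD = 200_000  # tokens


def gemini_25_pro_input_cost(prompt_tokens: int) -> float:
    """Input cost in dollars; the whole prompt is billed at the tier it falls in."""
    rate_per_m = 2.50 if prompt_tokens > LONG_CONTEXT_THRESHOLD else 1.25
    return prompt_tokens * rate_per_m / 1_000_000


for tokens in (150_000, 200_000, 200_001, 250_000):
    print(f"{tokens:>7,} tokens -> ${gemini_25_pro_input_cost(tokens):.4f}")
```

One extra token past the threshold roughly doubles the input bill for that request, so it can be worth trimming or splitting prompts that land just above 200K.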

When Context Size Is a Real Advantage

Gemini’s 2M token window is a genuine, meaningful advantage for processing entire legal case files (frequently 300K to 1M+ tokens), full codebase security audits without chunking, analyzing entire book manuscripts or multi-document research corpora, and running long-horizon agentic tasks where all context must be maintained in-window.

For these workloads, the engineering simplicity of eliminating chunking logic is worth the cost premium. Chunking introduces edge cases (content split at important boundaries), retrieval errors, and significant implementation overhead. When Gemini’s context window eliminates chunking entirely, it reduces complexity and failure modes.

Developer Experience

Developer experience is a long-tail cost. A harder API adds days of setup, weeks of debugging, and ongoing maintenance overhead. The best model in the world is not worth choosing if your team spends an extra month building around its quirks.

Documentation Quality

OpenAI maintains the most comprehensive and developer-focused API documentation in the industry, with extensive code examples across Python, JavaScript, and cURL, detailed guides for every feature, and an active changelog. The documentation is structured around task completion (how to build agents, how to implement fine-tuning, how to reduce costs) rather than just API reference, which significantly reduces onboarding time.

Google’s Gemini API documentation through AI Studio is strong and has improved considerably through 2025, with interactive playground access, clear SDK references, and Vertex AI integration guides. However, the documentation is split across multiple surfaces: AI Studio, Google Cloud docs, and Vertex AI docs. This creates navigation friction for developers unfamiliar with Google’s ecosystem structure.

SDKs and Language Support

Both providers offer Python and JavaScript/TypeScript SDKs as first-class citizens. OpenAI’s Python SDK is among the most widely used third-party libraries in the Python ecosystem, with extensive community extensions, LangChain and LlamaIndex integration, and native support in most AI application frameworks. The SDK API format has become an industry standard: many providers including Groq, Together AI, and others implement OpenAI-compatible APIs, meaning code written against the OpenAI SDK often works against alternatives with a one-line URL change.
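As a rough illustration of the "one-line URL change", the sketch below builds an OpenAI SDK client for different OpenAI-compatible providers. The base URLs are the ones these providers document at the time of writing and should be verified before use; the helper names are hypothetical, and model IDs differ per provider, so the model is left as a parameter.

```python
# Base URLs for OpenAI-compatible endpoints; verify against each provider's docs.
BASE_URLS = {
    "openai": "https://api.openai.com/v1",
    "groq": "https://api.groq.com/openai/v1",
    "together": "https://api.together.xyz/v1",
}


def client_kwargs(provider: str, api_key: str) -> dict:
    """Return the constructor arguments for a given provider.

    Only `base_url` (and the key) changes between providers.
    """
    return {"base_url": BASE_URLS[provider], "api_key": api_key}


def make_client(provider: str, api_key: str):
    """Build an OpenAI SDK client pointed at the chosen provider."""
    from openai import OpenAI  # lazy import; requires `pip install openai`

    return OpenAI(**client_kwargs(provider, api_key))


def ask(client, model: str, prompt: str) -> str:
    """Send one Chat Completions request and return the reply text."""
    reply = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return reply.choices[0].message.content
```

Calling `ask(make_client("groq", key), model_id, "Hello")` and `ask(make_client("openai", key), model_id, "Hello")` exercises the same application code against two providers. Features beyond basic chat completions are not guaranteed to be compatible.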

Google’s Gemini SDK quality has improved significantly but the ecosystem of third-party integrations defaults to OpenAI compatibility first. If your application stack uses LangChain, LlamaIndex, DSPy, or similar frameworks, OpenAI typically works out of the box while Gemini may require additional configuration or community-maintained adapters.

API Design

OpenAI’s Responses API, which replaced the Chat Completions API as the recommended integration path for new projects, provides a unified interface for agentic workflows. It combines the simplicity of chat completions with native tool integration, stateful context management, and multi-turn persistence. OpenAI has committed to a feature parity timeline with the older Assistants API before sunsetting it in August 2026, meaning the Responses API is the durable path forward.

The Responses API design is intuitive: it accepts flexible inputs, supports store: true for stateful sessions, provides previous_response_id for easy chaining without managing full conversation history manually, and returns structured items for both text and tool calls. Internal OpenAI testing shows 40% to 80% better cache utilization versus Chat Completions, which translates directly to lower effective costs.
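A minimal sketch of that chaining pattern follows. It assumes `client` is an `openai.OpenAI` instance (or any object with a compatible `responses.create` method); the helper name is hypothetical.

```python
def run_conversation(client, model: str, prompts: list[str]) -> list[str]:
    """Send prompts in order, chaining each turn to the previous response.

    Only the new user input is sent on each turn; earlier turns are
    referenced through `previous_response_id` instead of being resent.
    """
    replies = []
    previous_id = None
    for prompt in prompts:
        kwargs = {"model": model, "input": prompt, "store": True}
        if previous_id is not None:
            kwargs["previous_response_id"] = previous_id
        response = client.responses.create(**kwargs)
        replies.append(response.output_text)
        previous_id = response.id
    return replies
```

With the real SDK this would be called as `run_conversation(OpenAI(), "gpt-5.4", ["First question", "Follow-up"])`, where the model ID is taken from this guide and should be checked against the current model list.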

Gemini’s API design is clean and well-structured, with strong support for multimodal inputs across text, image, audio, and video in a unified message format. The Google ADK (Agent Development Kit) provides agent orchestration capabilities, though it is newer and less battle-tested than OpenAI’s Agents SDK.

Rate Limits

OpenAI organizes rate limits into usage tiers (1 through 5) that unlock progressively higher limits as you spend more on the platform. New accounts start at Tier 1 with conservative limits and can request tier upgrades after meeting spending thresholds. For startups scaling quickly, hitting rate limits before approval for a tier upgrade is a real operational friction point. Production incidents caused by API rate limiting are common during growth phases.
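One common mitigation while waiting on a tier upgrade is retrying with exponential backoff. The sketch below is provider-agnostic: `RateLimited` is a stand-in exception defined here for illustration (with the OpenAI Python SDK the corresponding error is `openai.RateLimitError`), and the delay values are arbitrary starting points.

```python
import random
import time


class RateLimited(Exception):
    """Stand-in for a provider SDK's HTTP 429 error."""


def call_with_backoff(request, max_attempts=5, base_delay=1.0, max_delay=30.0, sleep=time.sleep):
    """Call `request()` and retry on RateLimited with exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return request()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Jitter spreads out retries from many clients hitting the limit at once.
            sleep(delay + random.uniform(0, delay / 2))
```

Backoff smooths over short bursts; it does not raise your actual limit, so sustained traffic above the tier ceiling still needs a queue or a second provider.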

Google’s Gemini free tier is limited to 5 to 15 requests per minute and 1,000 requests per day. Paid tiers provide substantially higher limits but require Google Cloud project setup and billing configuration. For teams already on Google Cloud, this is seamless. For teams starting fresh, the setup overhead is higher than OpenAI’s account-based billing.

Reliability and Uptime

OpenAI’s API has a history of intermittent reliability issues during high-demand periods, partially reflecting its role as infrastructure for a massive fraction of the global AI application ecosystem. Google’s infrastructure, built on the same underlying network as Google Cloud and its consumer products, has delivered more consistent uptime, but Gemini-specific API stability has occasionally lagged as the platform scales.

Both providers warrant fallback strategy planning for production systems. LiteLLM as a unified gateway allows routing across providers and automatically failing over to the other API when one experiences degradation, a pattern increasingly common among mature AI-powered products.
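The failover pattern itself is small. This sketch shows the idea in plain Python rather than LiteLLM's own configuration: each provider is passed in as a callable, and `ProviderError` is a stand-in for whatever timeout, 429, or 5xx exception your SDKs raise. All names are hypothetical.

```python
class ProviderError(Exception):
    """Stand-in for a timeout, 429, or 5xx raised by a provider call."""


def complete_with_failover(prompt, providers):
    """Try each (name, call) pair in order and return the first success.

    Returns a (provider_name, result) tuple. Raises ProviderError with the
    collected messages if every provider fails.
    """
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))
```

Returning the provider name alongside the result makes it possible to log how often the fallback path is taken, which is usually the first thing you want to know after an incident.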

Fine-Tuning

OpenAI fine-tuning is available for multiple model tiers including GPT-4.1 Nano and selected GPT-5 series variants. Fine-tuned models deliver better performance on domain-specific tasks, reduce prompt verbosity (and therefore cost), and produce more consistent structured outputs. The fine-tuning process is well-documented with clear pricing and manageable minimum dataset requirements.

Gemini fine-tuning is available through Vertex AI with access to Gemini 1.5 Flash and some 2.x variants. The Vertex AI setup requirement adds complexity for teams not already on Google Cloud, but for enterprise Google Cloud customers, the integration is seamless and the fine-tuning pipeline plugs directly into existing MLOps infrastructure.

For teams seriously considering fine-tuning, OpenAI’s path is more accessible. For enterprise teams on Google Cloud, Vertex AI fine-tuning is a natural extension of existing infrastructure.

Ecosystem and Tooling

OpenAI’s ecosystem is the most mature in the industry by a significant margin. Codex CLI enables autonomous terminal-based coding. The Responses API built-in tools (web search, file search, computer use, code interpreter) are production-ready and well-integrated. Azure OpenAI Service extends the platform with enterprise data governance, compliance controls, and regional deployment options that satisfy the requirements of financial services, healthcare, and government organizations.

Google’s ecosystem advantage is concentrated in its own product stack. Teams building on Google Cloud get native BigQuery integration, seamless Workspace connectivity, and Vertex AI’s MLOps tooling. For Google-centric organizations, this integration eliminates entire categories of plumbing work. For teams outside the Google ecosystem, these integrations are irrelevant.

Tool Use, Function Calling, and Agents

The shift from “language model” to “agent infrastructure” is the most significant architectural development of 2025 and 2026. Both APIs now provide first-class primitives for building autonomous systems, but their implementations reflect different philosophies about what agents should look like.

OpenAI’s Responses API and Agents SDK

OpenAI’s Responses API is the new standard for agent development. It is designed from the ground up as an agentic loop: a single API call can initiate multiple internal tool invocations across web search, file retrieval, code execution, computer interaction, and custom function calls, all within a single request that resolves when the task is complete.

Key capabilities in the Responses API:

- Built-in web search with structured citation output

- File search across uploaded documents and vector stores

- Computer use (GPT-5.4) for desktop application automation via screenshot-based interaction

- Code interpreter for running Python in a sandboxed environment

- Remote MCP server integration for connecting to external services via the Model Context Protocol

- Stateful sessions with store: true and previous_response_id chaining

- Encrypted reasoning for preserving thinking context without storing raw reasoning

Internal OpenAI tests show the Responses API delivers a 3% SWE-bench improvement over Chat Completions with the same prompt and model, due to better context handling. The cache utilization improvement of 40% to 80% versus Chat Completions reduces effective costs for most agent workloads.

The open-source Agents SDK handles multi-agent orchestration: routing tasks between specialized sub-agents, managing handoffs, implementing guardrails, and providing trace visibility into agent decision chains. This SDK has been production-deployed by multiple organizations and is actively maintained.

The Assistants API is being sunset in August 2026. Teams using it should migrate to the Responses API, which provides all the same capabilities with better performance and lower costs.

Gemini’s Agent Capabilities

Gemini’s function calling and tool integration have matured significantly. The Google ADK provides an orchestration framework for multi-agent systems, with native integration into Google Search (grounded responses), Google Workspace (reading and writing documents, calendar, email), BigQuery (data queries), and Vertex AI pipelines.

The grounding with Google Search capability is a meaningful differentiator: Gemini can access real-time web information as a native tool call without additional integration overhead, charged per search query rather than as token cost. For agents that require current information (news monitoring, market research, real-time data analysis), Google’s native search integration reduces latency and implementation complexity.

Where Gemini agents have an inherent advantage: long-context agent workflows where the agent must maintain large amounts of state across many reasoning steps. A 2M token context window means less need for external memory management, as the entire relevant history can fit in-context rather than requiring retrieval.

Reliability in Production Agent Systems

Multi-step agents fail in characteristic ways: a single tool call failure mid-chain causes the entire workflow to fail, error messages are propagated incorrectly, or intermediate outputs are malformed in ways that cascade. OpenAI’s more mature agent infrastructure (better error message clarity, more extensive community debugging experience, and more documented failure modes) gives it a reliability advantage in production agent deployments.
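Whichever platform you choose, the first two failure modes can be contained at the tool boundary. The sketch below is one way to do that, not either vendor's API: `run_tool` is a hypothetical helper that executes a tool call and always returns a structured result, so a failure becomes data the agent loop can hand back to the model instead of an exception that ends the run.

```python
import json


def run_tool(tools: dict, name: str, raw_arguments: str) -> dict:
    """Execute one tool call and always return a structured result."""
    if name not in tools:
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        arguments = json.loads(raw_arguments)
    except json.JSONDecodeError as exc:
        return {"ok": False, "error": f"malformed arguments: {exc.msg}"}
    if not isinstance(arguments, dict):
        return {"ok": False, "error": "arguments must be a JSON object"}
    try:
        return {"ok": True, "result": tools[name](**arguments)}
    except Exception as exc:  # deliberately broad: any tool failure becomes data
        return {"ok": False, "error": f"{type(exc).__name__}: {exc}"}


tools = {"add": lambda a, b: a + b}
print(run_tool(tools, "add", '{"a": 1, "b": 2}'))
print(run_tool(tools, "add", '{"a": 1}'))
```

The second call fails because an argument is missing, and the caller receives an error record rather than a traceback.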

Gemini’s agent tooling is capable but has a smaller production-deployment knowledge base. Teams hitting edge cases with Google ADK have less community documentation to draw from and fewer third-party observability integrations.

Agent Use Case Decision Framework

| Use Case | Better Platform | Reason |
|---|---|---|
| Customer support automation | OpenAI | Lower latency, mature function calling, reliability track record |
| Document extraction pipelines | Gemini | Long context eliminates chunking; native document ingestion |
| Real-time data analysis agents | Gemini | Native Google Search grounding; BigQuery integration |
| Autonomous coding agents | OpenAI | Codex CLI, SWE-bench performance, computer use |
| CRM and business workflow automation | OpenAI | Azure OpenAI enterprise integration; MCP connectors |
| Research synthesis | Gemini | 2M context; scientific reasoning benchmark leadership |

Multimodal Capabilities

Both APIs handle text, image, audio, and video input. The meaningful differentiation is in output modalities and the depth of multimodal reasoning.

Image Input and Understanding

Both the GPT-5 family and Gemini 3.x process images natively. Gemini’s multimodal training is more deeply integrated: the model was designed as a multimodal system from the foundation rather than having vision capabilities added to a text model. This shows in tasks that require reasoning across text and images simultaneously, such as analyzing charts with accompanying text, processing UI screenshots with overlaid labels, or interpreting diagrams in technical documentation.

OpenAI’s image understanding is production-ready and reliable, with better consistency in following structured extraction instructions from image inputs. For applications where image interpretation must produce structured, machine-readable output (form extraction, table parsing, product catalog processing), OpenAI’s instruction-following precision has an edge.

Image Generation

OpenAI’s GPT Image models (via the Responses API) produce high-fidelity images with strong instruction following, better consistency in preserving details like faces and logos, and integration into multi-turn image editing workflows. GPT Image 1.5 represents the current state of the art for instruction-following image generation.

Gemini’s Imagen 3 offers competitive image generation quality with strong integration into Google Cloud’s content management and distribution infrastructure. For enterprise applications requiring image generation within a Google Cloud workflow, Imagen 3 is the natural choice. For applications requiring the most detailed instruction-following in generative image editing, GPT Image 1.5 performs better.

Audio

OpenAI’s audio stack includes both speech-to-text (Whisper-based transcription) and text-to-speech generation, with the Realtime API now GA providing low-latency bidirectional audio streaming for production voice assistants. This is a mature, production-tested audio pipeline.

Gemini’s audio capabilities are growing. Gemini 2.5 Flash includes text-to-speech at $0.50 input / $10.00 audio output per million tokens. Audio input carries a 2x to 7x surcharge depending on model tier. For pure voice assistant applications, OpenAI’s Realtime API currently provides better latency and ecosystem maturity.

Video

Gemini’s native video understanding is a clear differentiator. Gemini 3.1 Pro can analyze hours of video content natively: understanding temporal sequences, explaining procedures shown in video, and synthesizing audio and visual information from recordings. OpenAI handles video through frame extraction rather than native video understanding, which is less effective for temporally complex video reasoning.

For applications involving video (tutorial processing, surveillance analysis, sports analysis, or educational content creation), Gemini’s native video capability represents a structural advantage that is not replicable on OpenAI without significant engineering overhead.

Use Case-Based Matchup

SaaS Applications

What it requires: Low per-request cost, predictable pricing, scalable throughput, reliable uptime, fast response times.

OpenAI advantage: The model tier cascade (Nano to Mini to standard to flagship) allows precise cost matching to task complexity. A SaaS product with diverse request types can route simple intent classification to GPT-5 Nano at $0.05/million input tokens, conversational responses to GPT-5 Mini at $0.25/million, and complex analysis to GPT-5 at $1.25/million. The Batch API cuts the cost of non-real-time workloads by 50%. This granular routing can reduce total API spend by 60% to 80% versus using a single mid-tier model for everything.

Gemini advantage: The free tier allows early-stage SaaS products to run without API cost until they hit rate limits. Flash-Lite at $0.075/million input is among the cheapest production-grade models available, though GPT-5 Nano’s $0.05/million input rate undercuts it.

Winner: OpenAI, for the model routing architecture and predictable scaling behavior.

Chatbots (Customer Support, AI Assistants)

What it requires: Sub-second response times, coherent multi-turn context, reliable persona consistency, cost per session that scales with user volume.

OpenAI advantage: Response times, prompt caching on system prompts (up to 90% savings on repeated context), and the Responses API’s stateful session management. For a support chatbot handling 10,000 daily users, the combination of GPT-5 Mini pricing and system prompt caching typically lands at $0.01 to $0.03 per user session.
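A back-of-the-envelope version of that per-session estimate is sketched below. It uses the GPT-5 Mini rates quoted in this guide ($0.25 input, $2.00 output per million tokens) and reads "up to 90%" as the best case; the token counts are hypothetical, and the model ignores conversation history growing turn by turn, so treat the result as a lower bound.

```python
def session_cost(
    turns: int,
    system_prompt_tokens: int,
    fresh_input_tokens_per_turn: int,
    output_tokens_per_turn: int,
    input_rate: float = 0.25,      # dollars per million input tokens
    output_rate: float = 2.00,     # dollars per million output tokens
    cache_discount: float = 0.90,  # best-case discount on cached input
) -> float:
    """Estimate the dollar cost of one chat session with a cached system prompt."""
    if turns < 1:
        raise ValueError("turns must be at least 1")
    cached_rate = input_rate * (1 - cache_discount)
    # The first turn pays full price for the system prompt; later turns hit the cache.
    full_price_input = system_prompt_tokens + turns * fresh_input_tokens_per_turn
    cached_input = (turns - 1) * system_prompt_tokens
    output = turns * output_tokens_per_turn
    total = (
        full_price_input * input_rate
        + cached_input * cached_rate
        + output * output_rate
    )
    return total / 1_000_000


with_cache = session_cost(10, 2_000, 500, 300)
without_cache = session_cost(10, 2_000, 500, 300, cache_discount=0.0)
print(f"with caching: ${with_cache:.4f}, without: ${without_cache:.4f}")
```

Output tokens dominate the total in this example, so caching helps most when the system prompt is large relative to the replies.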

Gemini advantage: Longer context window supports conversations with extensive history without truncation. Free tier allows early testing with real users.

Winner: OpenAI for production deployments. Gemini Flash for budget-sensitive high-volume deployments.

Coding Assistants

What it requires: Accurate code generation, reliable debugging, multi-file context awareness, instruction following precision.

OpenAI advantage: GPT-5.3 Codex and GPT-5.4 lead on SWE-bench benchmarks. Codex CLI provides autonomous coding in terminal environments. Computer use enables agents to run code, observe output, and iterate, a complete coding loop that Gemini cannot currently match.

Gemini advantage: Gemini Code Assist is free and runs on Gemini 2.5 Pro in VS Code and JetBrains. For understanding entire large codebases in context, the 2M token window holds roughly twice as much code as GPT-5.4’s 1M window: on the order of 200,000 lines versus 100,000, assuming about 10 tokens per line.

Winner: OpenAI for serious production coding tools. Gemini for free individual developer tooling and large codebase analysis.

Content Generation (SEO, Blogs, Marketing)

What it requires: Structural coherence, tone consistency, avoidance of hallucination, cost-effectiveness at scale.

Both platforms produce high-quality long-form content. OpenAI maintains slightly better tone consistency across extended outputs and better adherence to style instructions. Gemini produces competitive quality with more stylistic variance. For high-volume content production (50 to 100 articles per day), Gemini’s Batch API at 50% discount on Flash models provides the most cost-effective path.

Winner: OpenAI for quality and consistency. Gemini Flash for volume and cost efficiency.

AI Agents and Automation

What it requires: Reliable function calling, multi-step reasoning, external API integration, error handling, observability.

OpenAI’s Responses API is the more mature platform for production agents. Built-in tools cover most common agent requirements (web search, code execution, file retrieval, computer use). The Agents SDK handles multi-agent orchestration with handoffs, guardrails, and tracing. Community documentation, third-party observability tools (Langfuse, Braintrust, Honeyhive), and a larger existing deployment base mean debugging is faster.

Winner: OpenAI decisively for production agent systems.

Document Processing and Enterprise Workflows

What it requires: Large document ingestion, accurate extraction, summarization, classification at document scale.

Gemini 3.1 Pro’s 2M token context window processes entire legal contracts, full research papers, or multi-document corpora without chunking. Eliminating chunking removes a category of engineering complexity and failure modes. Google Cloud integration means processed documents can flow directly into BigQuery, Drive, and enterprise data pipelines without additional connectors.

Winner: Gemini for document-heavy enterprise workflows.

Summary Table

| Use Case | Winner | Reason |
|---|---|---|
| SaaS applications | OpenAI | Model tier routing, cost efficiency, reliability |
| Customer support chatbots | OpenAI | Latency, caching, stateful sessions |
| Coding assistants | OpenAI (production), Gemini (free/large codebase) | SWE-bench, Codex CLI; Code Assist is free |
| Content generation (quality) | OpenAI | Tone consistency |
| Content generation (volume/cost) | Gemini | Flash Batch pricing |
| AI agents and automation | OpenAI | Responses API, Agents SDK maturity |
| Document processing | Gemini | 2M context, no chunking needed |
| Enterprise workflows | Gemini (Google stack) / OpenAI (Azure) | Ecosystem match |
| Video understanding | Gemini | Native video, no competitor |
| Voice assistants | OpenAI | Realtime API, lower latency |

Vendor Lock-In and Switching Costs

This is one of the most important considerations for long-term platform decisions, and it is almost never discussed adequately in feature comparisons.

OpenAI Format as Industry Standard

OpenAI’s Chat Completions API format has become the de facto standard that much of the industry has converged on. Providers including Groq, Together AI, Fireworks AI, Perplexity, and many others implement OpenAI-compatible APIs, meaning code written against OpenAI often works against alternatives by changing a single base URL. This is a significant hedge against lock-in. OpenAI compatibility is now an interoperability layer.

The Responses API is newer and less widely replicated, but its successor position within OpenAI’s ecosystem means long-term investment in it is well-placed.

Gemini Ecosystem Depth

Google’s lock-in operates through ecosystem integration rather than API format. If you build on Vertex AI, use Google Search grounding, integrate with BigQuery, and deploy through Google Cloud, switching away from Gemini means re-engineering all of those integrations. This is significant for organizations already on GCP, where platform advantages become the reason to stay.

For teams not on Google Cloud, Gemini integration is more portable. The Gemini API format is accessible independently of Google Cloud, and the SDK works without Vertex AI.

Abstraction Layers as a Defense

The practical answer for most teams is abstraction. LiteLLM provides a unified gateway that presents a single OpenAI-compatible interface in front of both OpenAI and Gemini (and Claude, Groq, and others), enabling model switching with configuration changes rather than code changes. This pattern (often called multi-provider routing) is increasingly standard for mature AI applications because it reduces lock-in to any single provider, enables cost-based routing (send simple queries to Gemini Flash-Lite, complex reasoning to GPT-5.2), provides automatic failover when one provider has an outage, and enables A/B testing between models without re-deploying application code.

Teams that build directly against provider SDKs without an abstraction layer are making a bet on their chosen provider’s stability, pricing, and continued availability. Given the pace of change in this market, that bet carries real risk.

Safety, Alignment, and Enterprise Readiness

OpenAI Safety Approach

OpenAI’s safety approach balances usability and restriction. The models are capable and directive-following, with moderation systems that block clearly harmful outputs while permitting a wide range of legitimate use cases. This makes OpenAI the default choice for most SaaS and startup applications where developer control over acceptable use is important but strict content filtering would impair the product.

Azure OpenAI Service, which provides access to the same models through Microsoft’s cloud infrastructure, adds enterprise-grade compliance controls: SOC 2 certification, HIPAA Business Associate Agreement eligibility, data processing agreements compliant with GDPR and other regional frameworks, and isolated deployment options for organizations requiring data residency guarantees.

Gemini Safety Approach

Google’s alignment layer for Gemini is historically more conservative than OpenAI’s, reflecting Google’s enterprise positioning and the reputational consequences of AI failures at Google’s scale. Some developers note that Gemini’s outputs feel more filtered, which reduces flexibility in creative and edge-case applications but increases predictability for regulated use cases.

Vertex AI provides enterprise compliance controls equivalent to Azure OpenAI (SOC 2, HIPAA, GDPR, regional processing options) within Google Cloud’s existing enterprise security framework. For organizations already on GCP with established compliance infrastructure, Gemini via Vertex AI adds compliance capabilities without requiring new vendor relationships.

Industry-Specific Considerations

Healthcare: Both Azure OpenAI (via Microsoft HIPAA BAA) and Vertex AI Gemini (via Google Cloud HIPAA BAA) support healthcare deployments. The choice follows existing cloud vendor relationships.

Finance: Both platforms support the compliance requirements of financial services organizations. OpenAI’s broader adoption means more existing implementations to reference. Gemini’s BigQuery integration is a meaningful operational advantage for firms whose data already lives in Google Cloud.

Legal: Gemini’s long-context advantage for processing legal documents, combined with Vertex AI’s compliance posture, makes it well-suited for legal technology applications. OpenAI’s stronger instruction-following makes it better for structured legal extraction tasks.

Pros and Cons

OpenAI API Pros

Ecosystem maturity: The widest adoption, the largest community, the most third-party integrations, and the most documented failure modes. Hiring engineers who know the OpenAI API is easier than hiring those who know Gemini. Onboarding new developers takes less time. Open-source tooling defaults to OpenAI compatibility.

Agent infrastructure: The Responses API plus Agents SDK represents the most complete, production-tested agentic development platform available from any provider. Computer use, built-in tools, multi-agent orchestration, and trace observability are all first-class features rather than afterthoughts.

Cost routing architecture: The model tier cascade (Nano to Mini to GPT-5 to GPT-5.2 to GPT-5.4) allows granular cost optimization. Routing 80% of requests to cheap tiers and reserving expensive models for complex tasks reduces effective cost more aggressively than any single-model approach.

Coding performance: SWE-bench leadership at flagship tier, Codex CLI for autonomous development, and native computer use for end-to-end coding agents make OpenAI the strongest platform for developer tools.

Max output tokens: GPT-5.4’s 128K output token limit enables generating very long, coherent documents in a single API call, a structural advantage for comprehensive report generation, detailed code output, and long-form content creation.

OpenAI API Cons

Smaller flagship context window: 1M tokens versus Gemini’s 2M means more chunking engineering for truly large document workflows.

No persistent free tier: The $5 credit expires. Prototyping and early-stage development always require a paid account for sustained use.

Latency at the flagship tier: GPT-5.4’s increased capability comes with increased latency versus Gemini Flash-Lite. For latency-sensitive real-time applications, careful model selection is necessary.

Ecosystem lock-in at the margins: The Responses API is powerful but not yet replicated by alternative providers, creating migration overhead if you build deeply on its agentic features.

Gemini API Pros

Largest context window: 2M tokens on Gemini 3.1 Pro is the industry maximum. No chunking required for document processing workloads that would push GPT-5.4 to its limit.

Persistent free tier: Real, ongoing access to production-quality models without payment. The most generous free tier in the industry by a significant margin.

Native video understanding: Analyzing hours of video content natively is a capability OpenAI does not currently match through its API.

Scientific reasoning: GPQA Diamond benchmark leadership reflects genuinely stronger performance on research-grade analytical tasks.

Google ecosystem integration: For organizations on Google Cloud, the integration with BigQuery, Workspace, Search grounding, and Vertex AI eliminates entire categories of plumbing work.

Flash model speed: Gemini 3.1 Flash-Lite’s sub-200ms response latency is the fastest production option available.

Gemini API Cons

Long context pricing cliff: The 2x pricing surcharge above 200K tokens for Pro models means large-context processing costs more than the headline rate suggests.

Max output tokens: Most Gemini models top out at 8K output tokens, requiring multiple API calls to generate very long single documents, an engineering constraint that OpenAI’s 128K output ceiling eliminates.

Less mature agent ecosystem: The Google ADK and Gemini’s agent tooling are capable but have less production deployment experience, fewer third-party integrations, and less community documentation than OpenAI’s agent stack.

Ecosystem dependency for advanced features: Native Google Search grounding, BigQuery integration, and Workspace connectivity are valuable but only for Google Cloud users. Teams outside the Google ecosystem cannot access these advantages.

Audio surcharges: The 2x to 7x audio input surcharge versus text input pricing significantly changes the economics for voice-heavy applications.

Model Routing and Hybrid Architecture in Practice

The smartest teams in 2026 are not asking “OpenAI or Gemini.” They are asking “how do we route each request to the optimal model from either provider?”

Why Hybrid Routing Makes Sense

No single model is optimal for every request in a multi-purpose application. A customer-facing chatbot might need fast, cheap responses for simple FAQ queries (Gemini Flash-Lite at $0.075/million), coherent conversational responses for standard support interactions (GPT-5 Mini at $0.25/million), deep reasoning for complex troubleshooting (GPT-5.2 at $1.75/million), and document analysis when the user uploads a PDF (Gemini 2.5 Pro for long-context processing).

Running all four request types through a single mid-tier model overpays for simple requests and underperforms on complex ones. Routing by complexity and task type reduces costs by 20% to 40% while improving output quality where it matters.

LiteLLM for Provider Abstraction

LiteLLM presents a unified OpenAI-compatible API that proxies to both providers. Configuration changes (not code changes) switch models. With LiteLLM you can set fallback routing so that if OpenAI returns a 429 rate limit error, requests automatically route to Gemini. You can configure cost-based routing where requests under 5,000 tokens go to Flash-Lite and requests over 50,000 tokens go to Gemini 2.5 Pro. You can run A/B tests between models without modifying application code and track spend per model per user cohort in real time.
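The size-based rule is simple enough to express directly. The sketch below is plain Python, not LiteLLM configuration, and makes three assumptions: the model labels are hypothetical aliases rather than exact API model IDs, the four-characters-per-token estimate is a rough rule of thumb for English text, and the middle band (5,000 to 50,000 tokens), which the rule above leaves unspecified, defaults to GPT-5 Mini.

```python
def estimate_tokens(text: str) -> int:
    """Rough token estimate: about four characters per token for English text."""
    return max(1, len(text) // 4)


def pick_model(prompt_tokens: int) -> str:
    """Choose a model alias from the prompt size."""
    if prompt_tokens < 5_000:
        return "gemini-flash-lite"  # small prompts: cheapest tier
    if prompt_tokens > 50_000:
        return "gemini-2.5-pro"     # large prompts: long-context model
    return "gpt-5-mini"             # assumed default for the middle band


for size in (1_200, 20_000, 120_000):
    print(size, "->", pick_model(size))
```

In production you would replace `estimate_tokens` with the provider's own tokenizer or token-counting endpoint, since routing errors near the thresholds translate directly into cost.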

This architecture eliminates hard lock-in to either provider without adding significant latency. LiteLLM’s proxy overhead is under 10ms for local deployments.

Example Routing Architecture for a SaaS Product

| Request Type | Recommended Model | Estimated Cost per 1K Requests |
|---|---|---|
| Intent classification | GPT-5 Nano | $0.005 |
| Standard chat response | GPT-5 Mini | $0.035 |
| Complex analysis | GPT-5 | $0.28 |
| Document summarization (over 100K tokens) | Gemini 2.5 Pro | $0.25 |
| Batch content generation (async) | Gemini 2.5 Flash (Batch) | $0.035 |
| Real-time coding assistance | GPT-5.2 | $0.49 |

With a traffic mix weighted toward the cheaper tiers, a product routing requests across these models spends roughly 80% less than a product running everything through GPT-5.2.
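The size of the saving depends entirely on the traffic mix. The sketch below works through one hypothetical mix using the per-1K-request costs from the table; the shares are invented for illustration, and the baseline treats every request as costing the table's GPT-5.2 figure, which is a simplification because request sizes differ by type.

```python
# Cost per 1K requests, taken from the routing table above.
COST_PER_1K = {
    "intent_classification": 0.005,
    "standard_chat": 0.035,
    "complex_analysis": 0.28,
    "document_summarization": 0.25,
    "batch_content": 0.035,
    "realtime_coding": 0.49,
}

# Hypothetical share of total requests per type; must sum to 1.
TRAFFIC_MIX = {
    "intent_classification": 0.40,
    "standard_chat": 0.35,
    "complex_analysis": 0.10,
    "document_summarization": 0.05,
    "batch_content": 0.05,
    "realtime_coding": 0.05,
}


def blended_cost_per_1k(costs: dict, mix: dict) -> float:
    """Weighted average cost per 1K requests for a given traffic mix."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(costs[kind] * share for kind, share in mix.items())


routed = blended_cost_per_1k(COST_PER_1K, TRAFFIC_MIX)
baseline = COST_PER_1K["realtime_coding"]  # everything on GPT-5.2
savings = 1 - routed / baseline
print(f"routed: ${routed:.3f} per 1K, baseline: ${baseline:.2f}, savings: {savings:.0%}")
```

Shifting even ten points of traffic from the cheap tiers to the expensive ones changes the result noticeably, so rerun the calculation with your own request logs before committing to a budget.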

Recent Developments (Q4 2025 to March 2026)

OpenAI Developments

The OpenAI API in early 2026 is fundamentally different from what it looked like twelve months ago. The Responses API, launched in March 2025, has become the recommended integration path for all new projects. It unified the fragmented Chat Completions and Assistants API surfaces and added native agent capabilities that previously required significant engineering overhead.

GPT-5.3 Codex was released to the Responses API, introducing the current benchmark leader for terminal-based coding automation. GPT-5.4 added native computer use via the Responses API: the ability to see, click, and type in desktop applications. This represents a category expansion. Agents are no longer limited to text and code outputs but can interact with any software that has a graphical interface.

The Assistants API deprecation announcement (sunset August 26, 2026) is operationally significant for teams still using it. Migration to the Responses API is well-documented and provides equivalent or better capabilities, but teams that have not started migration should prioritize it.

Prompt caching efficiency improved significantly in the Responses API: 40% to 80% better cache utilization versus Chat Completions reduces effective costs for most workloads without any code changes.

Gemini Developments

Google’s model release cadence accelerated through late 2025 and early 2026. Gemini 2.5 Pro (June 2025) provided a mid-tier option matching GPT-5 on both capability and pricing. Gemini 3 (late 2025) brought native image generation, text-to-speech, and significant reasoning improvements. Gemini 3.1 Pro (February 2026) pushed the frontier further with a 2M token context window and 94.3% GPQA Diamond, currently the highest publicly reported score on that benchmark.

Google’s free tier expansion has been consistent, with six models now available without payment, making Gemini the most accessible major provider for developers building without initial budget.

The Vertex AI Model Optimizer, launched in 2025, automatically routes requests to the appropriate Flash or Pro model based on a cost/quality/balance preference configuration. For enterprise Google Cloud customers, this simplifies model selection without requiring per-request routing logic.

Market Dynamics

The AI API market in 2026 is more competitive than at any prior point. DeepSeek V3.2 and R1 have established that frontier-quality inference is achievable at dramatically lower cost, pushing all major providers to accelerate price reductions. xAI’s Grok leads on pure per-token cost efficiency at $0.20/$0.50 for its flagship. This competitive pressure benefits all developers: prices have dropped dramatically from GPT-4 era rates.

The convergence of mid-tier pricing between OpenAI (GPT-5 at $1.25/$10.00) and Google (Gemini 2.5 Pro at $1.25/$10.00) reflects this competitive equilibrium. Differentiation has shifted from price to ecosystem, tooling, context size, and specialized capabilities.

Real-World Developer Scenarios

Scenario 1: Startup Building a B2B SaaS Chatbot

Context: 2,000 daily active users, each session averaging 15 turns, mixed simple FAQ and complex troubleshooting queries. Budget is a primary concern. Team has 2 engineers.

OpenAI approach: Route 60% of requests to GPT-5 Mini ($0.25/$2.00), 35% to GPT-5 ($1.25/$10.00), 5% to GPT-5.2 ($1.75/$14.00) for the most complex issues. Enable prompt caching on the system prompt (10,000 tokens saved per session). Estimated monthly cost: $800 to $1,200.

Gemini approach: Use Gemini 2.5 Flash ($0.30/$2.50) for most requests, Gemini 2.5 Pro ($1.25/$10.00) for complex reasoning. Prototype on free tier. Estimated monthly cost: $600 to $900.

Decision: Start on Gemini free tier for development. Deploy to Gemini 2.5 Flash for production. Once you have product-market fit and the team grows, implement LiteLLM routing to add OpenAI models for response quality A/B testing. The free tier for development is worth the minor ecosystem difference at this stage.

Scenario 2: Developer Tool Company Building a Coding Assistant

Context: Product embedded in VS Code, needs accurate code generation, debugging support, multi-file context awareness. 500 paying developers.

OpenAI approach: GPT-5.2 as the primary model for code generation, Codex CLI integration for autonomous agent features. GPT-5 Nano for quick token-count estimation and simple completions. Monthly cost at 500 users averaging 100K tokens each: roughly $750 with mixed-tier routing.

Gemini approach: Gemini Code Assist free tier for individual developers covers most use cases. Gemini 2.5 Pro for codebase-level analysis.

Decision: OpenAI for serious production coding products, particularly if you need autonomous agent capabilities, SWE-bench-quality output, or computer use. Gemini Code Assist is a strong option for budget-constrained individual developer tools.

Scenario 3: Enterprise Legal Tech Company

Context: Processing entire case files (200 to 500 pages), contract comparison across multiple documents, regulatory compliance analysis. Customers are law firms.

OpenAI approach: GPT-5.4 for its 1M context window, no tiered surcharges. A standard 200-page contract (roughly 80,000 tokens input, 5,000 tokens output) costs approximately $0.85 per document.

Gemini approach: Gemini 3.1 Pro for its 2M context window and native document ingestion. The same 200-page document at Gemini 3 Pro pricing ($2.00/million input) costs approximately $0.16 for input and $0.15 for output, or $0.31 per document, significantly cheaper. For multi-document analysis requiring 300K+ tokens simultaneously, Gemini’s 2M context handles the full set in a single request, although the 2x input pricing step applies once the prompt crosses the 200K boundary.

Decision: Gemini decisively for document-processing enterprise applications. The cost difference is substantial, the context advantage is structural, and Vertex AI compliance coverage satisfies enterprise requirements.
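The input-side arithmetic in this scenario can be sketched in a few lines. This is a rough illustration, not a billing reference: the function name is hypothetical, the $2.00 rate and 200K threshold are the figures quoted above, and the sketch assumes the doubled rate applies to the whole prompt once it exceeds the threshold. Check the provider's current pricing page before relying on it.

```python
def gemini_pro_input_cost(
    prompt_tokens: int,
    base_price_per_million: float = 2.00,  # USD, figure quoted in this guide
    threshold: int = 200_000,              # long-context boundary quoted in this guide
    multiplier: float = 2.0,               # "2x" input price step
) -> float:
    """Estimate input cost in USD for a single request.

    Assumption: once the prompt exceeds the threshold, every input token
    in that request is billed at the higher rate.
    """
    over_threshold = prompt_tokens > threshold
    rate = base_price_per_million * (multiplier if over_threshold else 1.0)
    return prompt_tokens / 1_000_000 * rate


# A single 200-page contract (~80K tokens) stays under the threshold.
single_contract = gemini_pro_input_cost(80_000)

# A 300K-token multi-document request crosses it and pays the doubled rate.
multi_document = gemini_pro_input_cost(300_000)

print(f"80K-token prompt:  ${single_contract:.2f}")
print(f"300K-token prompt: ${multi_document:.2f}")
```

Running the same function across your real document size distribution shows how much of your volume would land above the 200K boundary.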

Scenario 4: AI-Native SaaS at Scale (10M Tokens/Month)

Context: Funded product, real revenue, 10 million tokens/month across diverse request types. Need to minimize burn.

Using intelligent tier routing with LiteLLM:

- 40% simple requests: GPT-5 Nano at 4M tokens = $0.20 input + $0.48 output = $0.68

- 40% standard requests: GPT-5 Mini at 4M tokens = $1.00 + $2.40 = $3.40

- 20% complex requests: GPT-5 at 2M tokens = $2.50 + $6.00 = $8.50

- Total: ~$12.58/month. With the 50% Batch API discount applied to 30% of requests, the effective cost is ~$10.70/month

Versus running everything on GPT-5: $12.50 + $30.00 = $42.50/month.

Routing plus batching saves roughly 75% of costs at this scale (about 70% from routing alone).
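The tier arithmetic above can be reproduced with a short script, which makes it easy to rerun with your own traffic split. This is a sketch under stated assumptions: the prices are the per-million rates quoted in this guide, the helper names are illustrative, and output volume is taken as 30% of input volume because that is the ratio the per-tier figures imply (for example, 4M input tokens alongside 1.2M output tokens). The batch adjustment assumes the discounted 30% of requests is spread evenly across tiers.

```python
# USD per million tokens as (input, output), using the rates quoted in this guide.
PRICES = {
    "gpt-5-nano": (0.05, 0.40),
    "gpt-5-mini": (0.25, 2.00),
    "gpt-5": (1.25, 10.00),
}

# Assumption: output tokens are 30% of input tokens for every tier.
OUTPUT_RATIO = 0.30


def tier_cost(model, input_millions):
    """Monthly cost in USD for one tier, given input volume in millions of tokens."""
    in_price, out_price = PRICES[model]
    output_millions = input_millions * OUTPUT_RATIO
    return input_millions * in_price + output_millions * out_price


def routed_cost(split):
    """Total monthly cost for a {model: input_millions} routing split."""
    return sum(tier_cost(model, volume) for model, volume in split.items())


def with_batch(cost, batch_share=0.30, batch_discount=0.50):
    """Apply a batch discount to the given share of spend."""
    return cost * (1 - batch_share * batch_discount)


split = {"gpt-5-nano": 4.0, "gpt-5-mini": 4.0, "gpt-5": 2.0}
routed = routed_cost(split)
batched = with_batch(routed)
baseline = tier_cost("gpt-5", 10.0)  # everything on GPT-5

print(f"routed:   ${routed:.2f}")
print(f"batched:  ${batched:.2f}")
print(f"baseline: ${baseline:.2f}")
print(f"savings from routing alone: {1 - routed / baseline:.0%}")
print(f"savings with batching:      {1 - batched / baseline:.0%}")
```

Changing `split` or `OUTPUT_RATIO` shows how sensitive the savings are to the share of traffic that truly needs the top tier.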

Community Insights

Developer communities on Reddit, Hacker News, and developer forums provide ground-level perspective that complements benchmark data.

What Developers Say About OpenAI

Positive sentiment consistently cites reliability, documentation quality, and ecosystem maturity. Production teams working on critical systems prefer OpenAI because the failure modes are well-understood and the community knowledge base for debugging is deep. The Responses API launch was received positively by agent developers who found the Assistants API complex to work with.

Negative sentiment focuses on pricing unpredictability at scale, particularly output-heavy workloads that can generate unexpectedly large bills. Rate limiting during growth phases generates consistent complaints, particularly from teams whose usage spikes around product launches or viral moments. Some developers note that streaming reliability has occasionally been inconsistent.

What Developers Say About Gemini

Positive sentiment consistently highlights the free tier, the context window for document processing, and the speed of Flash models. Developers building on Google Cloud report that the integration is genuinely seamless: data flows between BigQuery, Gemini, and Vertex AI without custom plumbing.

Negative sentiment cites latency variability on Pro models, the relative immaturity of the agent tooling ecosystem compared to OpenAI, and occasionally inconsistent structured output reliability. Some developers note that the API has changed enough between model generations that code written for Gemini 1.5 required meaningful updates for 2.5 and 3.x.

Key Pain Points Across Both Platforms

Pricing unpredictability: Both platforms require careful monitoring to avoid unexpected cost spikes. Multi-turn conversation history growth and output-heavy workloads are the most common sources of cost surprises. Setting hard limits via the billing API and implementing token monitoring are table stakes for production applications. Google recently introduced project-level spend caps in AI Studio to give developers more transparency and control.
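Conversation history growth is easy to underestimate, so a small model of it can help. The sketch below assumes the full history is resent as input on every turn with no caching or truncation, and that each turn adds a fixed number of user and assistant tokens. The function name and all token counts are illustrative, not measurements.

```python
def cumulative_input_tokens(
    turns: int,
    system_tokens: int,
    user_tokens_per_turn: int,
    assistant_tokens_per_turn: int,
) -> int:
    """Total input tokens billed across a session when history is resent each turn."""
    total = 0
    history = system_tokens
    for _ in range(turns):
        history += user_tokens_per_turn       # new user message joins the context
        total += history                      # the entire context is billed as input
        history += assistant_tokens_per_turn  # the reply becomes part of later context
    return total


# Hypothetical 15-turn session: 1,000-token system prompt,
# 100 user tokens and 200 assistant tokens per turn.
with_history = cumulative_input_tokens(15, 1_000, 100, 200)

# The same session if each turn only sent the system prompt plus the new message.
without_history = 15 * (1_000 + 100)

print(f"input tokens with full history: {with_history}")
print(f"input tokens without history:   {without_history}")
```

Because billed input grows with the square of the turn count under these assumptions, long sessions are where history truncation, summarization, or prompt caching pay off most.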

Rate limit timing: Neither provider makes it easy to predictably scale through rate limit tiers during rapid growth periods. Building exponential backoff, jitter, and fallback routing (ideally to the other provider via LiteLLM) is necessary for production reliability.
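A minimal sketch of that pattern follows, written against plain callables rather than any specific SDK. `RateLimitError` is a hypothetical stand-in for whatever exception your client raises on HTTP 429, and `call_with_backoff` is an illustrative name. In a real deployment each provider callable would wrap an OpenAI or Gemini client, or a routing layer such as LiteLLM.

```python
import random
import time
from typing import Callable, Optional, Sequence


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's rate-limit (HTTP 429) exception."""


def call_with_backoff(
    providers: Sequence[Callable[[str], str]],
    prompt: str,
    max_retries: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try each provider in order, retrying rate-limited calls with jittered backoff."""
    last_error: Optional[Exception] = None
    for provider in providers:
        for attempt in range(max_retries):
            try:
                return provider(prompt)
            except RateLimitError as err:
                last_error = err
                if attempt < max_retries - 1:
                    # Exponential backoff capped at max_delay, with full jitter
                    # so concurrent clients do not retry in lockstep.
                    cap = min(max_delay, base_delay * (2 ** attempt))
                    sleep(random.uniform(0, cap))
        # Retries exhausted for this provider: fall through to the next one.
    raise RuntimeError("all providers are rate-limited") from last_error
```

Passing `sleep` as a parameter keeps the function testable without real delays. Only rate-limit errors are retried here; other failures propagate immediately so that bad requests are not repeated.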

Structured output consistency: Both platforms have improved JSON mode reliability significantly, but production applications still benefit from output validation layers that catch and retry malformed structured outputs rather than propagating parse errors.
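One way to build such a validation layer is sketched below using only the standard library. The `generate` callable is a hypothetical wrapper around whichever model call you use, and the key-to-type schema is deliberately simple. Production systems often use a schema library instead, but the retry-on-invalid structure is the same.

```python
import json
from typing import Callable, Dict, Optional


def generate_validated(
    generate: Callable[[str], str],
    prompt: str,
    required: Dict[str, type],
    max_attempts: int = 3,
) -> dict:
    """Call the model until it returns a JSON object with the required typed keys."""
    last_error: Optional[Exception] = None
    for _ in range(max_attempts):
        raw = generate(prompt)
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as err:
            last_error = err  # malformed JSON: retry
            continue
        if not isinstance(data, dict):
            last_error = ValueError("top-level JSON value is not an object")
            continue
        # Collect keys that are absent or hold a value of the wrong type.
        bad_keys = [
            key for key, expected in required.items()
            if key not in data or not isinstance(data[key], expected)
        ]
        if bad_keys:
            last_error = ValueError(f"missing or mistyped keys: {bad_keys}")
            continue
        return data
    raise ValueError(f"no valid structured output after {max_attempts} attempts") from last_error
```

Capping attempts matters: each retry is a billed request, so the cap bounds the cost of a persistently malformed response.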

Final Matchup Table

| Category | Winner | Why |
|---|---|---|
| Pricing (budget tier) | Gemini | Free tier is persistent; Flash-Lite is cheapest production model |
| Pricing (mid tier) | Tied | Both at $1.25/$10.00 per million tokens |
| Pricing (at scale with routing) | OpenAI | Nano/Mini cascade enables more granular cost optimization |
| Speed / Latency | Gemini | Flash-Lite sub-200ms; Flash family fastest production models |
| Reasoning (general) | Tied | GPT-5.4 leads SWE-bench; Gemini 3.1 Pro leads GPQA Diamond |
| Coding (production tools) | OpenAI | Codex CLI, computer use, SWE-bench at GPT-5.4 tier |
| Coding (free tools) | Gemini | Code Assist is free and backed by Gemini 2.5 Pro |
| Context Length | Gemini | 2M tokens vs 1M; handles larger documents without chunking |
| Max Output Tokens | OpenAI | 128K vs 8K enables very long single-response generation |
| Multimodal (understanding) | Gemini | Native video, deeper multimodal reasoning |
| Multimodal (action) | OpenAI | Computer use, image generation via Responses API |
| Agent Ecosystem | OpenAI | Responses API + Agents SDK + Codex CLI = mature stack |
| Developer Experience | OpenAI | Documentation, SDK ecosystem, community knowledge base |
| Ecosystem (Google Cloud) | Gemini | BigQuery, Workspace, Search grounding native integration |
| Enterprise Readiness | Tied | Azure OpenAI vs Vertex AI both satisfy enterprise compliance |
| Free Tier | Gemini | No contest: persistent access to six production models |
| Document Processing | Gemini | 2M context + Google Cloud native = best enterprise document stack |
| Voice Applications | OpenAI | Realtime API GA, lower latency, mature audio pipeline |

No single platform wins across every category. The table reflects specialized strengths: OpenAI wins on action-oriented capabilities, developer ecosystem, and agent infrastructure. Gemini wins on context scale, document processing, free access, and Google ecosystem integration. The right choice is determined by which categories matter most for your specific workload.

FAQs

Is OpenAI API better than Gemini API in 2026?

Neither is universally better. They are optimized for different workloads. OpenAI leads for coding tools, agent automation, and developer ecosystem maturity. Gemini leads for document processing, video understanding, long-context applications, and free-tier access. For most SaaS products and startups, both are viable starting points, and the most advanced teams use both through routing layers.

Is Gemini API cheaper than OpenAI API?

It depends on the workload. Gemini’s free tier has no expiration. At the budget tier, Gemini 2.0 Flash-Lite ($0.075/$0.30) is cheaper than GPT-5 Nano ($0.05/$0.40) on output but slightly more expensive on input. At the mid-tier, both platforms offer $1.25/$10.00 per million tokens. At scale with intelligent routing, OpenAI’s model cascade can be cheaper. For large-context document processing under 200K tokens, Gemini is competitively priced. Above 200K tokens, the 2x pricing step changes the calculation entirely.

Which API has a better free tier: OpenAI or Gemini?

Gemini, by a wide margin. Google provides persistent free access to six production models with 1,000 requests per day and no expiration. OpenAI provides $5 in temporary credits that expire after 3 months. For developers prototyping or building early-stage products, Gemini’s free tier enables production-equivalent testing without financial commitment.

OpenAI API vs Gemini API for coding: which is better?

For serious production coding tools (IDE integration, autonomous code agents, debugging systems), OpenAI is stronger. GPT-5 scores 74.9% on SWE-bench Verified and Codex CLI provides autonomous terminal coding. Gemini Code Assist is free and performs well for individual developers doing standard tasks, and Gemini’s 2M context window handles large codebase analysis better than GPT-5.4’s 1M window.

Which API has a better context window: OpenAI or Gemini?

Gemini, at the flagship level. Gemini 3.1 Pro offers a 2-million-token context window versus GPT-5.4’s 1-million-token window. However, Gemini Pro models charge 2x the input price for contexts exceeding 200K tokens. The cost advantage of Gemini’s larger window is only real when you need contexts between 1M and 2M tokens, or when you need to avoid chunking for documents in the 200K to 1M range and can absorb the price step.

Which API is faster: OpenAI or Gemini?

For pure speed, Gemini Flash-Lite delivers sub-200ms latency for typical requests, the fastest production option from either provider. OpenAI’s GPT-5 Mini and Nano are also fast, but Gemini’s Flash family consistently outperforms on time-to-first-token benchmarks. For real-time applications and voice assistants, start with Gemini Flash. For coding assistants and analysis tools where a few hundred milliseconds is acceptable, OpenAI’s quality-per-latency tradeoff often wins.

OpenAI API vs Gemini API for SaaS applications?

OpenAI is the stronger default for SaaS. The model tier cascade enables aggressive cost optimization across diverse request types. The Responses API simplifies multi-turn conversation management. The ecosystem of monitoring, observability, and integration tools is mature. Gemini is a strong alternative for SaaS products in early stage (free tier) or for applications with document-heavy or Google Cloud-native requirements.

Gemini vs OpenAI for AI agents: which is better?

OpenAI’s Responses API and Agents SDK represent the current state of the art for production agent development. Built-in tools (web search, computer use, file search, code interpreter), multi-agent orchestration, tracing, and stateful sessions are mature and well-documented. Gemini’s Google ADK is capable for Google Cloud-native workflows and has native Search grounding as a genuine advantage. For general-purpose agents with external tool integration, OpenAI is the safer production choice. For agents that are primarily data-heavy and work within Google’s ecosystem, Gemini competes effectively.

Which API is better for processing long documents?

Gemini, clearly. The 2M token context window on Gemini 3.1 Pro handles entire legal case files, full research corpora, and multi-document sets without chunking. GPT-5.4’s 1M window is also substantial, but for the most demanding document workloads (entire codebases, multi-contract legal reviews, book-length research documents), Gemini’s context headroom provides a structural engineering simplification that is worth the cost premium.

Should you use both OpenAI and Gemini APIs together?